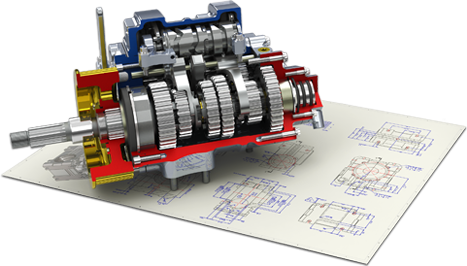

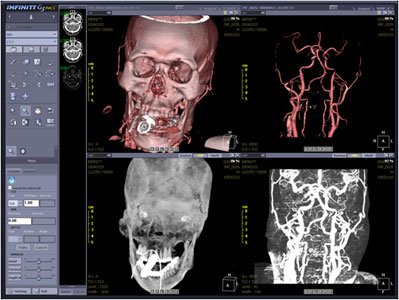

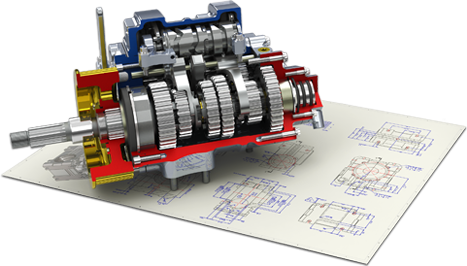

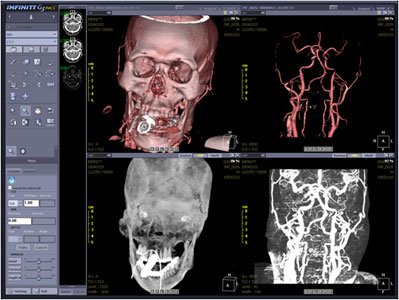

Exemples d'applications 3D : jeu vidéo (gauche), CAO (centre), imagerie médicale (droite).

3D models are ubiquitous in many fields:

Some examples of these applications are presented below.

Exemples d'applications 3D : jeu vidéo (gauche), CAO (centre), imagerie médicale (droite).

The creation and manipulation of 3D content remain, however, complex and costly:

Generative AI-based automatic tools are beginning to emerge, but remain highly difficult to control and with a significant environmental cost. They are still largely experimental for real-world productions. Controllability, quality, and efficiency remain major challenges.

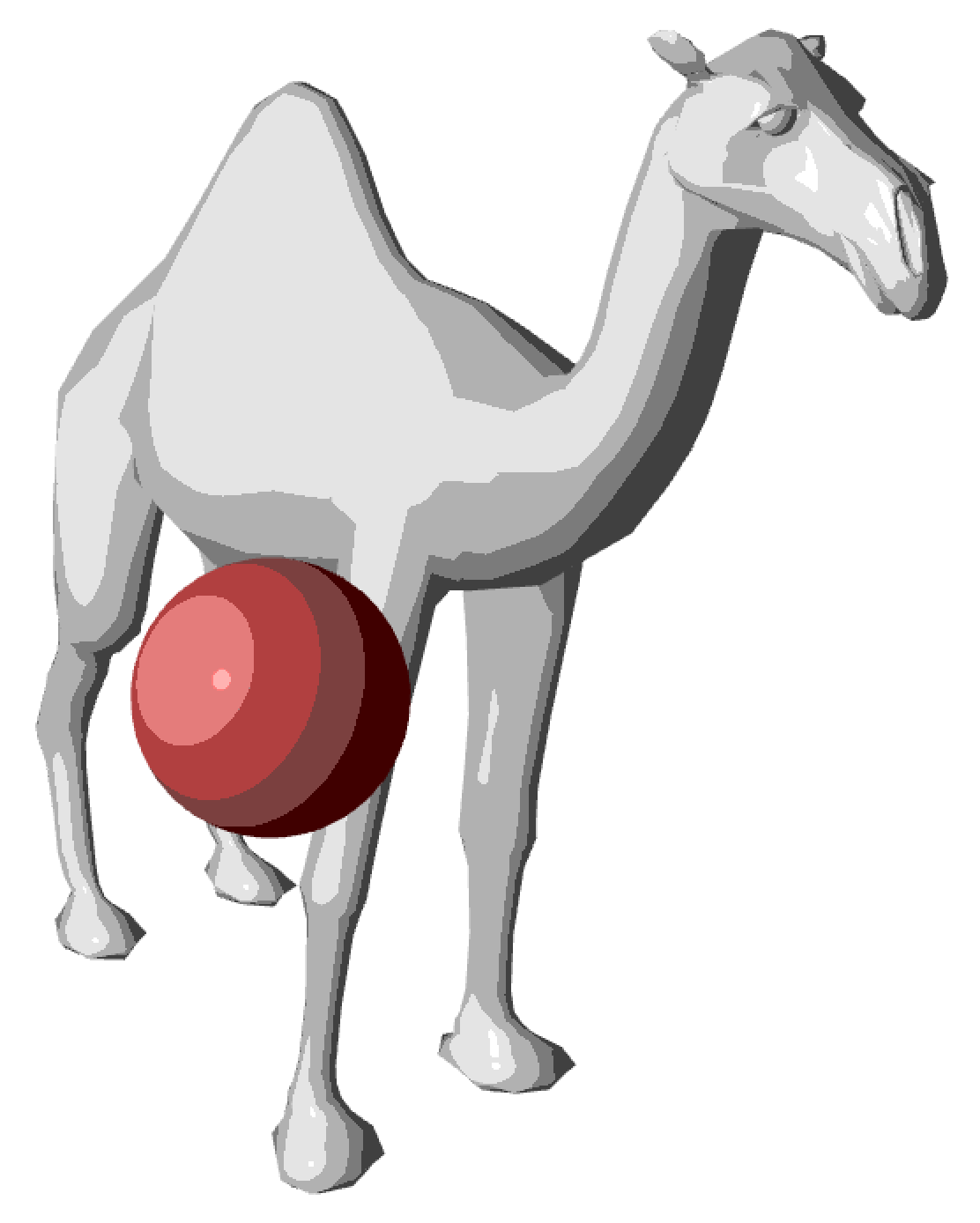

The computer graphics (Computer Graphics) designates the set of sciences and techniques enabling the generation and manipulation of visual data:

Applications are numerous: entertainment, design, simulation of natural phenomena, medical, biological, etc.

Computer graphics is structured around three major domains :

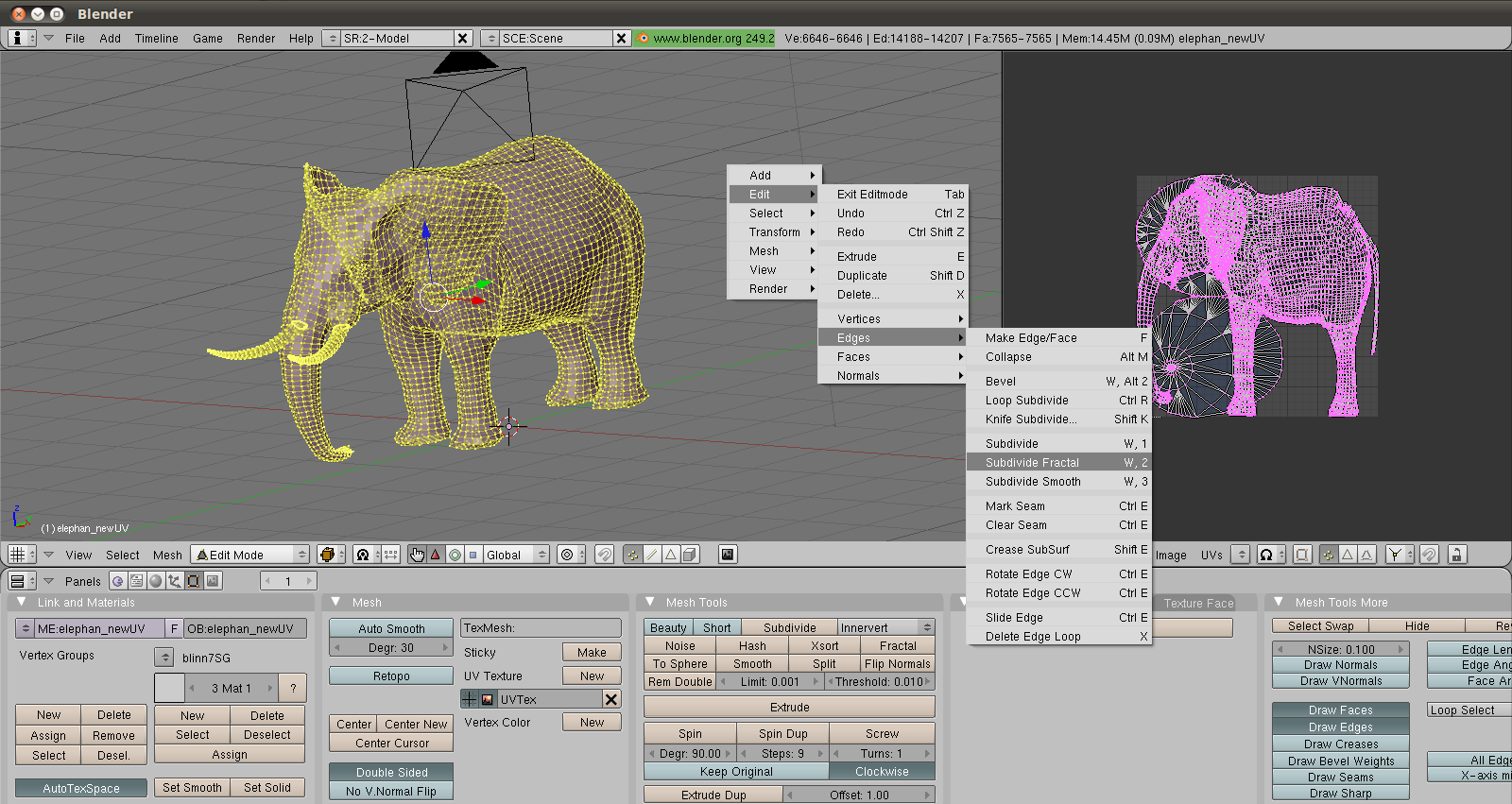

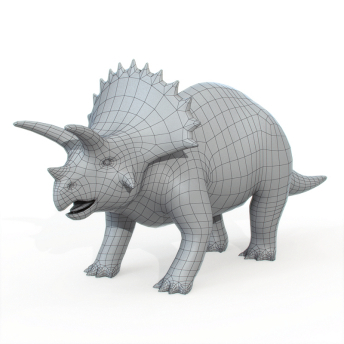

Modeling (Modeling) : creation and representation of 3D shapes. This covers the design of the geometry of objects (their shape, their structure) as well as their visual attributes (textures, materials). Modeling can be manual (an artist using software such as Blender or Maya) or procedural (algorithmic generation of shapes).

Animation : moving objects and characters over time. Animation encompasses both cinematic techniques (explicit movement of objects frame-by-frame or by interpolation of key poses) and physical techniques (simulation of forces, gravity, collisions, fluids, cloth, etc., which automatically generate motion from physical laws).

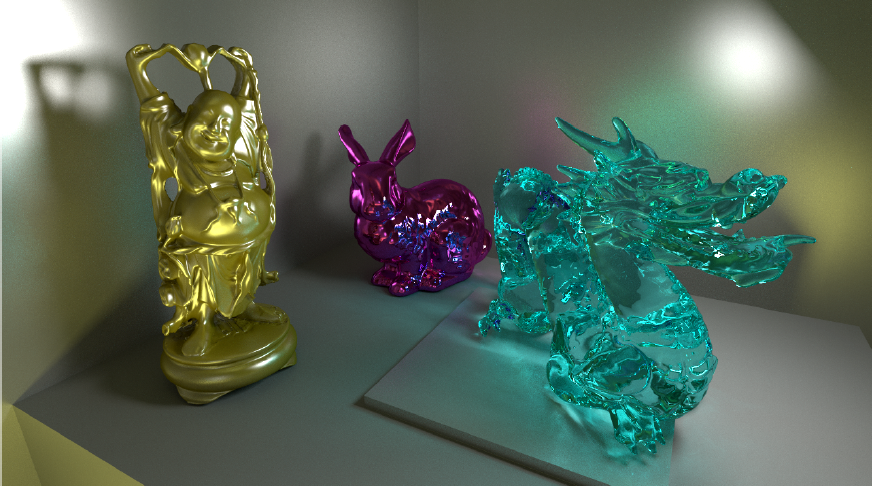

Image Synthesis / Rendering (Rendering) : generation of 2D images from a 3D scene. Rendering takes as input the description of geometry, materials, lights and the camera, then computes the resulting image. One distinguishes the rendering real-time (interactive, typically \(\geq\) 25-60 frames per second, used in video games and visualization) and the rendering offline (photorealistic, potentially taking from a few seconds to several hours per image, used in cinema and architecture).

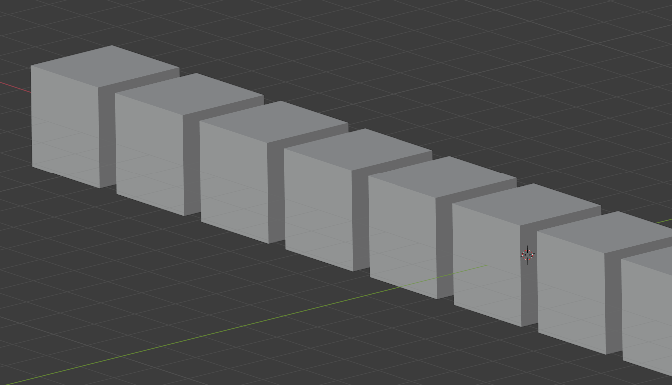

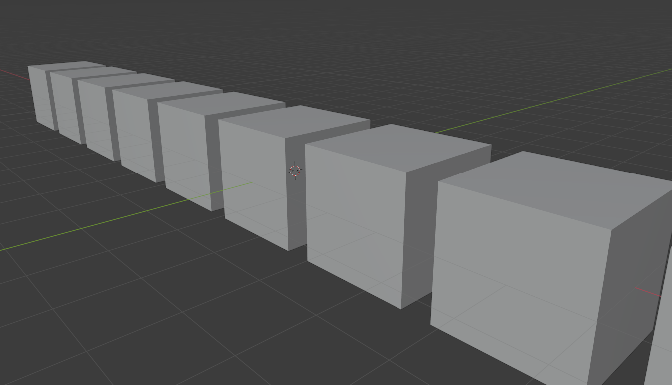

These three domains are illustrated below.

Modélisation (gauche), animation (centre) et rendu (droite).

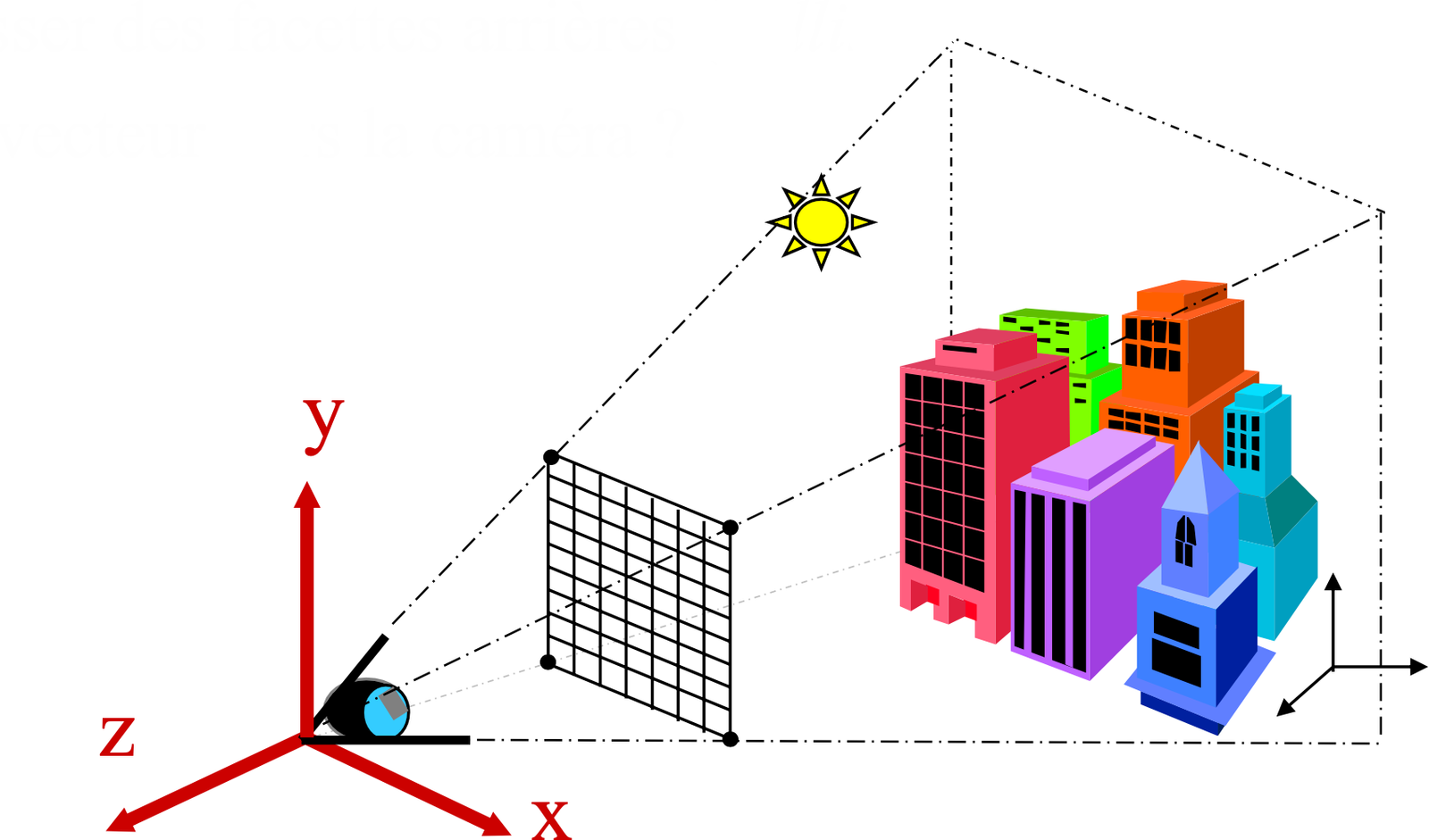

A 3D scene is described by three fundamental components:

3D Model: a surface or a volume representing the objects of the scene. Each object possesses geometry (shape) and visual attributes (color, material, texture). The scene may contain one or more objects, each positioned and oriented in a common 3D space called world space (world space).

Light Source: one or more light sources illuminating the scene. A light source is characterized by its position (or direction), its intensity and its color. Common types include point lights (emission from a point), directional lights (parallel rays, simulating the sun), and area lights (emission from a surface, for softer shadows). The interaction between light and the materials of the objects determines the visual appearance of the scene.

Camera: the viewpoint from which the scene is observed. The camera is defined by its position in space, its orientation (view direction), and its optical parameters (field of view, focal length, aspect ratio). It determines which objects are visible and how they are projected onto the final image.

The output of the rendering process is a 2D image corresponding to what the camera “sees” of the 3D scene. The following diagram summarizes the arrangement of these components.

A fundamental challenge in computer graphics is to give the illusion of depth and volume on a display that is intrinsically a 2D medium. Several visual phenomena contribute to this perception.

Far objects appear smaller than nearby objects. Two parallel lines in the scene converge toward a vanishing point on the image. This effect is directly related to the perspective projection performed by the camera (see the section on generalized coordinates).

Projection orthographique (gauche) vs projection perspective (droite).

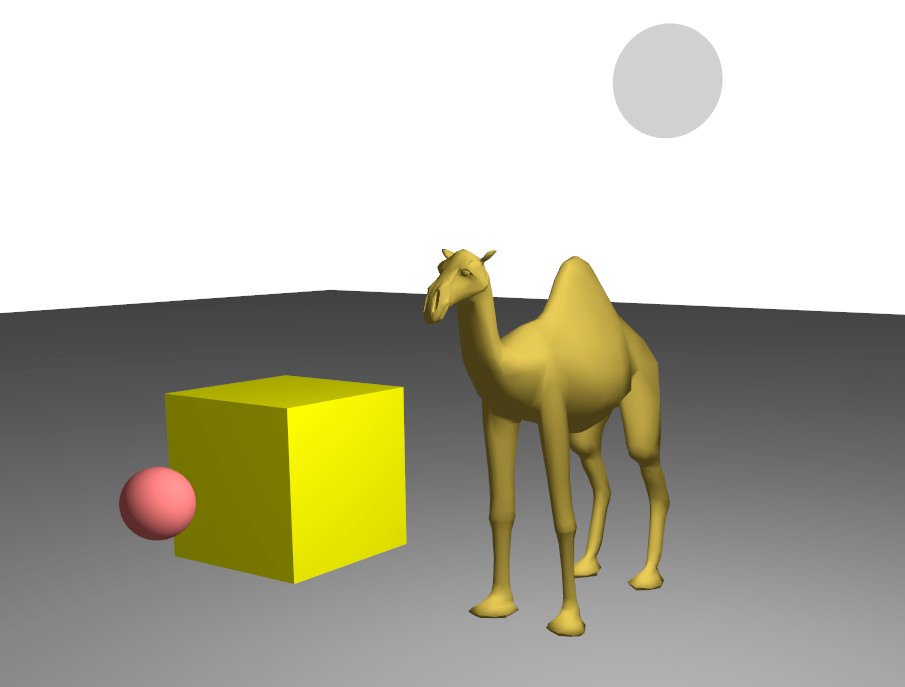

The color of a point on the surface varies according to the orientation of that surface relative to the light source. A surface facing the light appears bright, while a surface turned away from the light appears dark. This gradient of brightness, called shading (shading), provides essential cues about the curvature and volume of objects. By contrast, an object displayed with a uniform color (no shading) appears flat, like a disk rather than a sphere.

Couleur uniforme (gauche) vs illumination diffuse (droite) : l'ombrage révèle la géométrie.

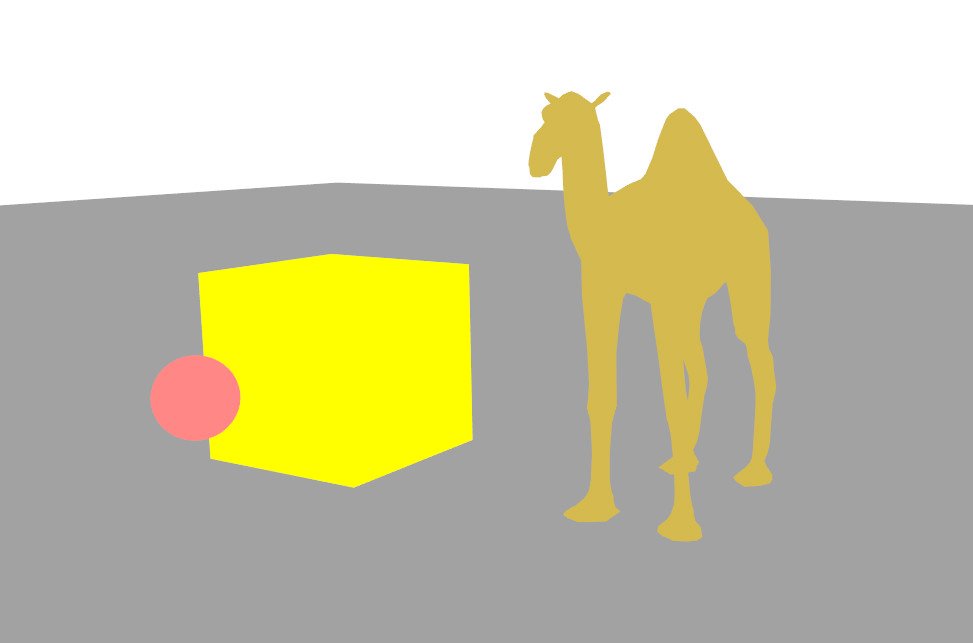

Nearby objects occlude objects located behind them. This simple yet powerful phenomenon provides a direct cue to the depth ordering of objects in the scene.

The shadow cast by an object onto another object or onto the ground visually anchors the object in the scene and provides information about its relative position and the direction of the light.

An image is a 2D grid of pixels of dimension \(N_x \times N_y\).

Other common formats :

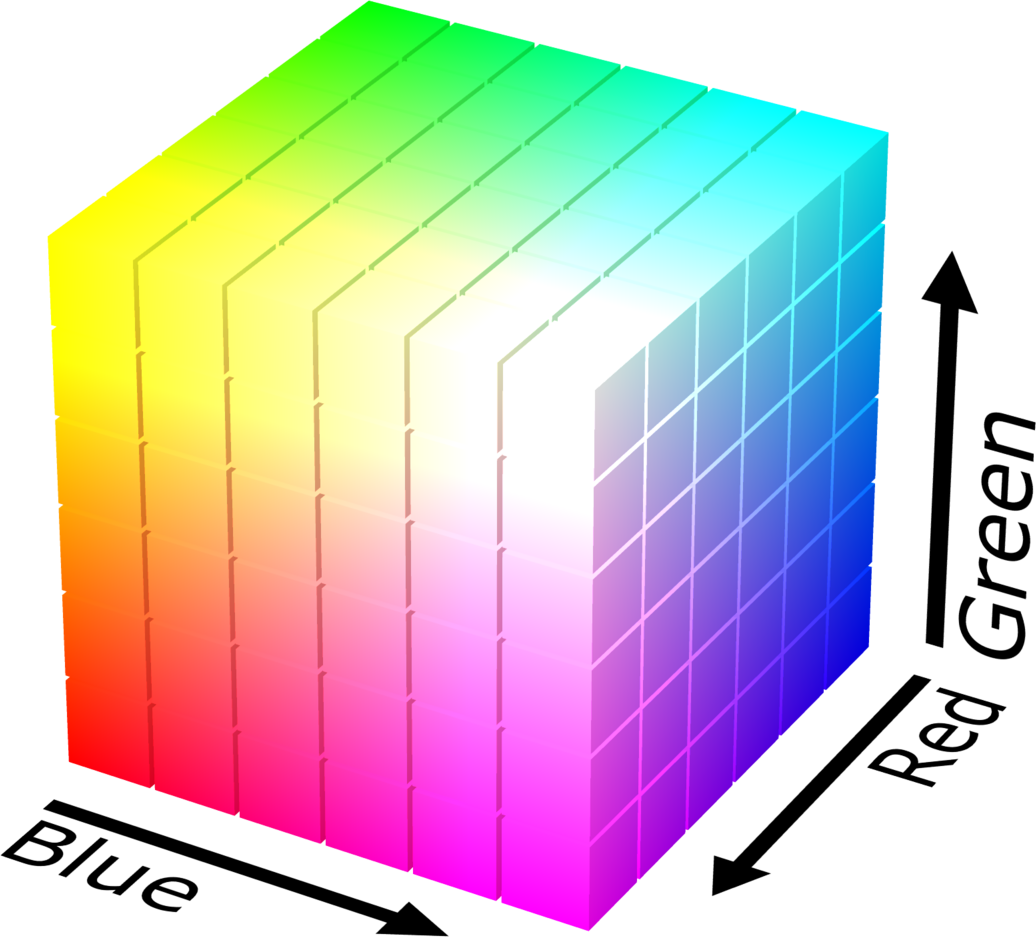

Note : the RGB space is not perceptually uniform (two colors close in RGB distance are not necessarily visually close). The RGB cube is shown in the figure below.

A 3D object can be represented in two fundamental ways:

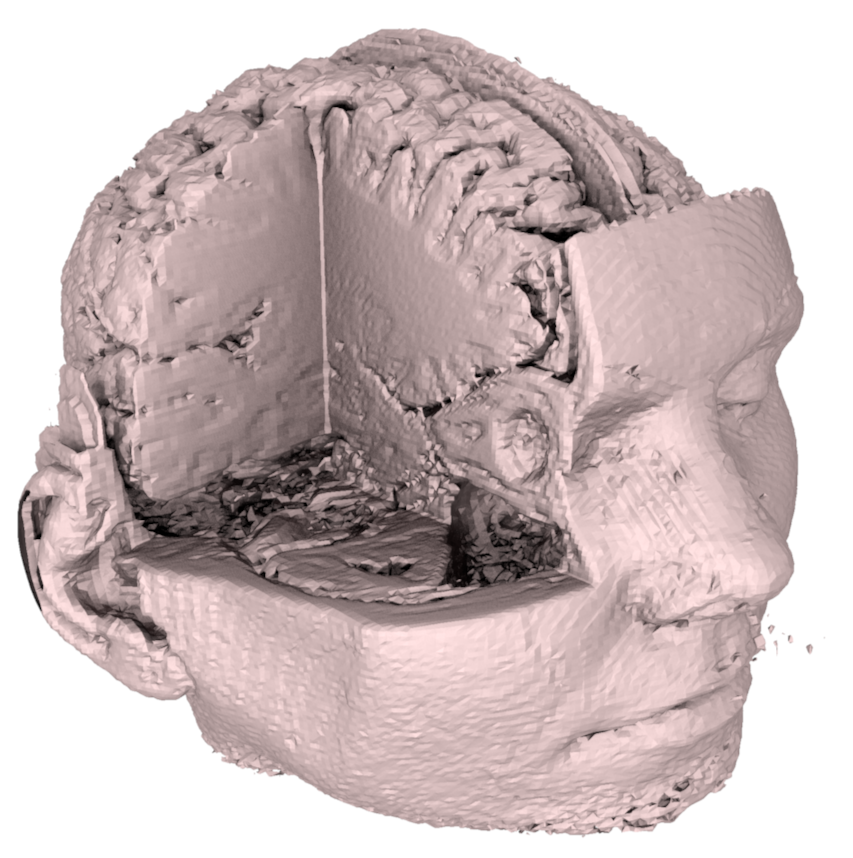

Volume : complete description of the object, including the interior. For example, one can associate a density, a temperature or any other physical attribute at every point of the volume. This representation is indispensable in medical imaging (MRI, CT scanner), in fluid simulation (smoke, clouds) and in materials physics. However, it is very memory-intensive: a volume with a resolution of \(256^3\) already contains more than 16 million voxels (volumetric pixel).

Surface : only the exterior and visible part of the object. Only the “skin” of the object is described, with no information about the interior. This representation is much more memory-efficient and, above all, much more efficient for rendering on the GPU, since only the surface contributes to the final image.

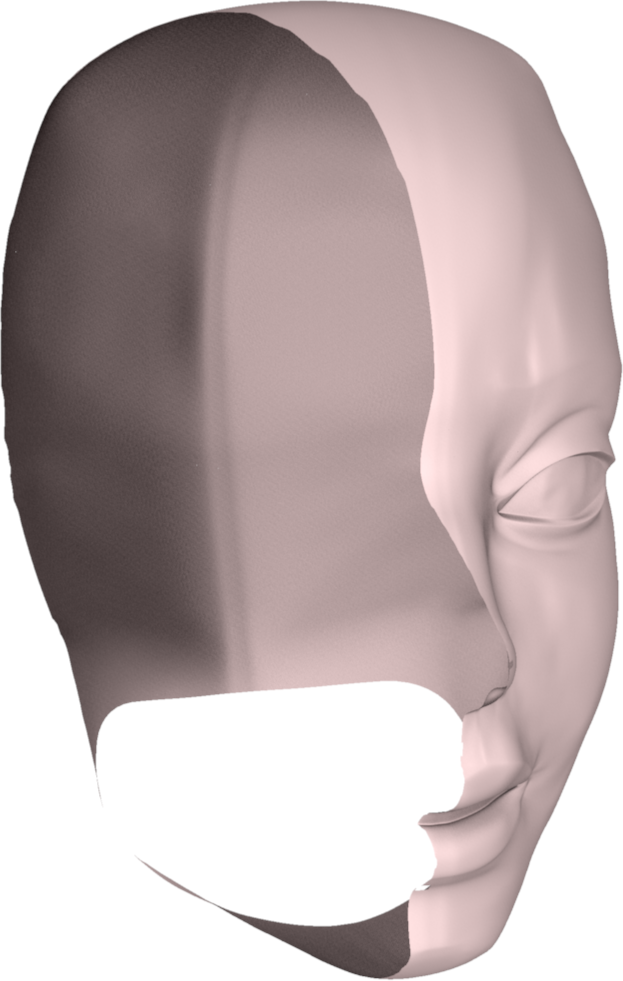

In real-time computer graphics, representations by surface are predominantly used. Volumetric representations are reserved for specific cases (atmospheric effects, medical data, physical simulation). The following figure compares these two approaches.

Représentation volumique (gauche) vs représentation surfacique (droite).

There are two fundamental families of mathematical description of a surface:

The two types of representation are compared in the diagram below.

Surface explicite paramétrique (gauche) vs surface implicite (droite).

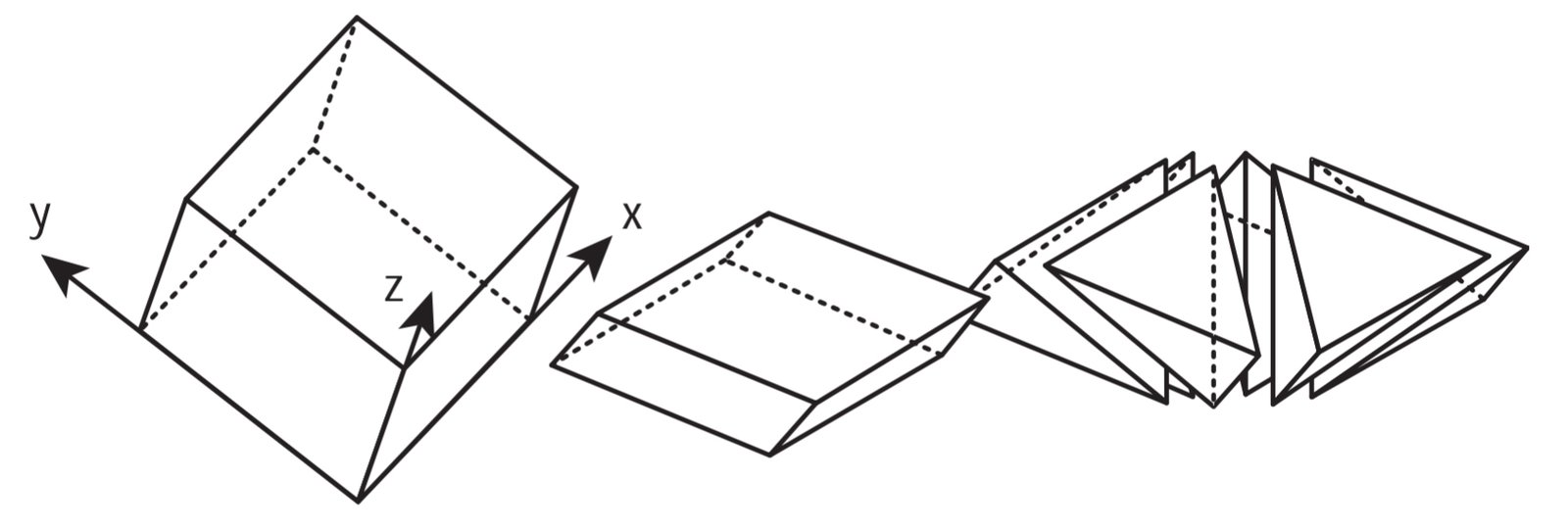

In practice, for complex shapes such as a character or a vehicle, no simple analytical formula can describe the surface. We then resort to discrete approximations.

In practice, arbitrary surfaces are rarely described by a single analytic function. Piecewise approximations are used with the aid of primitives and discrete elements.

The ideal properties of a description are:

Examples of models:

Graphics cards are specialized for rendering of triangular meshes.

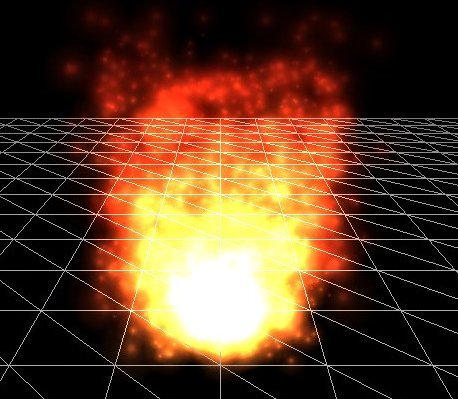

Ray tracing simulates the physical path of light in the scene. The basic principle is as follows: for each pixel of the image, a ray is cast from the camera through this pixel, and we search for the first object in the scene that this ray intersects. Once the intersection point is found, its color is computed based on the lighting, the material, and possibly reflections and refractions (by recursively launching new rays).

In practice, rays are often traced in the opposite direction of the light (from the camera toward the scene), because the vast majority of rays emitted by a light source never reach the camera. This inverse tracing is much more efficient.

Advantages:

Disadvantages:

The principle is summarized in the following diagram.

The rasterization approach adopts a radically different strategy from ray tracing. Instead of starting from each pixel and searching which object is visible, we start from each object (triangle) and determine which pixels it covers. This approach assumes that the scene is composed of triangles. The process unfolds in two steps:

Projection : each vertex of the triangle is projected from 3D space onto the camera’s 2D plane. This operation is performed by a matrix multiplication in a projective space: \(p' = M \, p\), where \(M\) is the matrix combining the transformation of the scene, the placement of the camera, and the perspective projection. This operation is extremely fast because it reduces to a matrix-vector product per vertex.

Rasterization : once the triangles are projected in 2D, each triangle is “filled” pixel by pixel. For each pixel covered by the triangle, its barycentric coordinates are computed and the attributes of the vertices (color, normal, texture coordinates, etc.) are interpolated to obtain the value at the pixel. Each such pixel is called a fragment.

These two steps are illustrated below.

Projection des sommets sur le plan caméra (gauche) et rasterization des triangles en pixels (droite).

A z-buffer mechanism (depth buffer) handles occlusion: for each pixel, we keep only the fragment closest to the camera, ensuring that objects in front occlude those behind.

Advantages :

Disadvantages :

Rasterization is the real-time rendering standard on GPUs. It is the approach used in all video games, interactive visualization applications and CAD software.

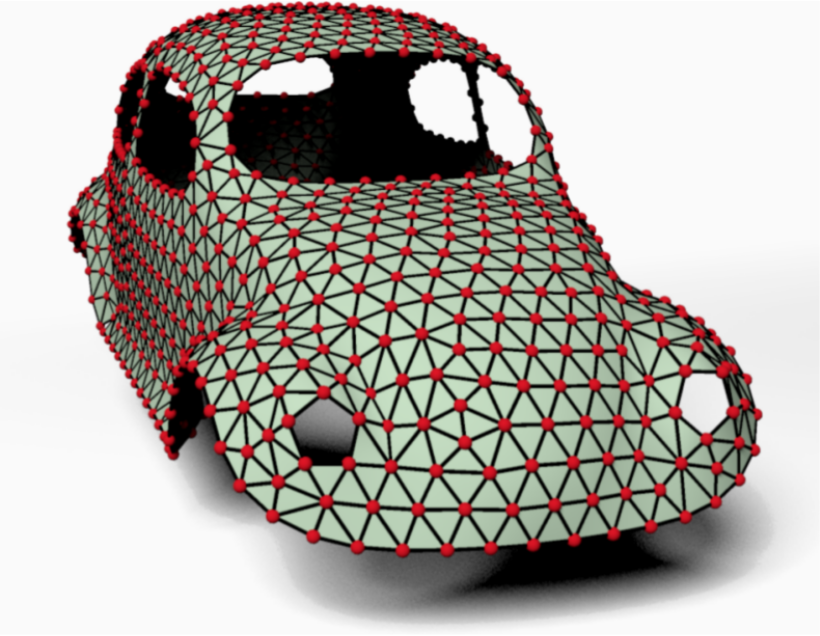

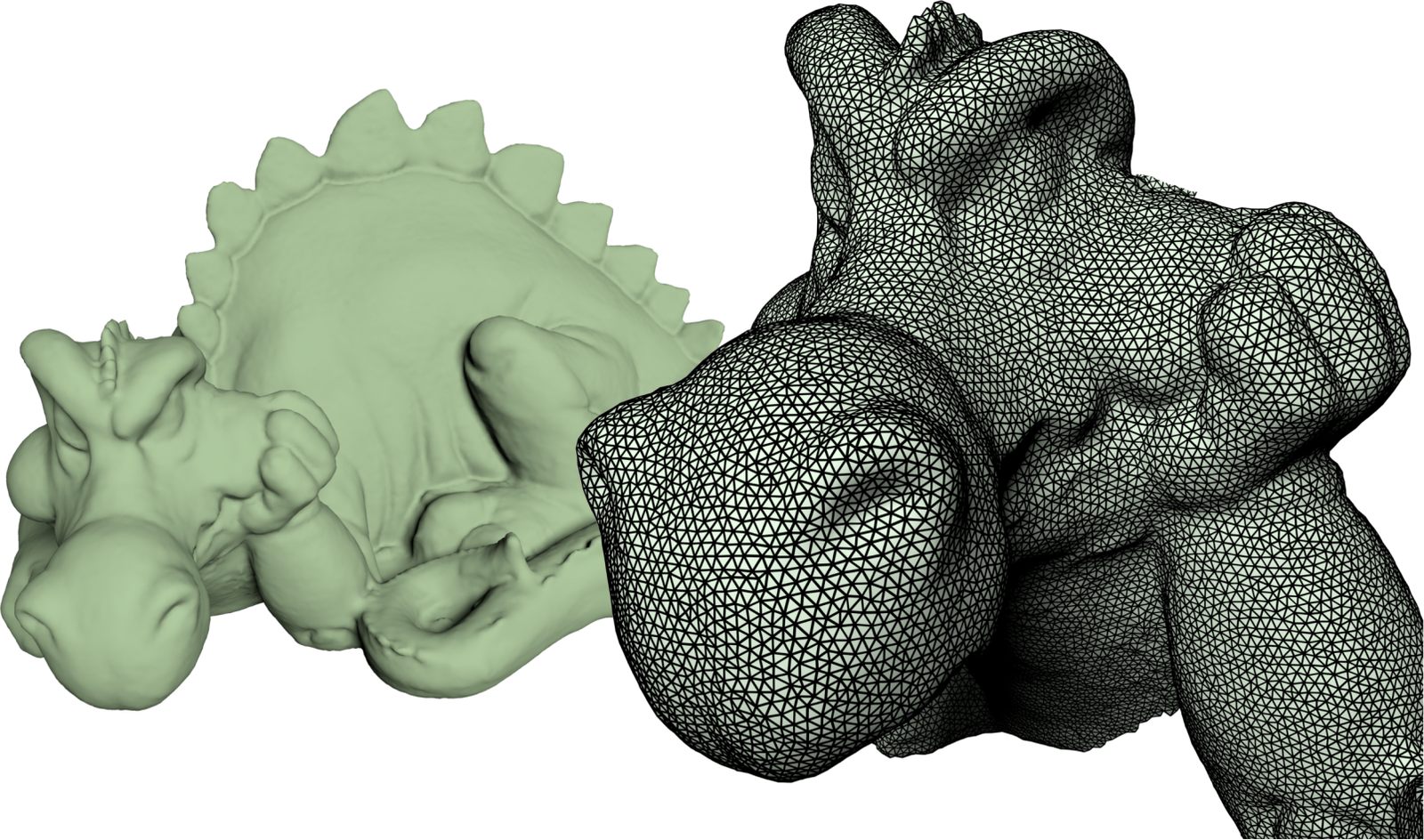

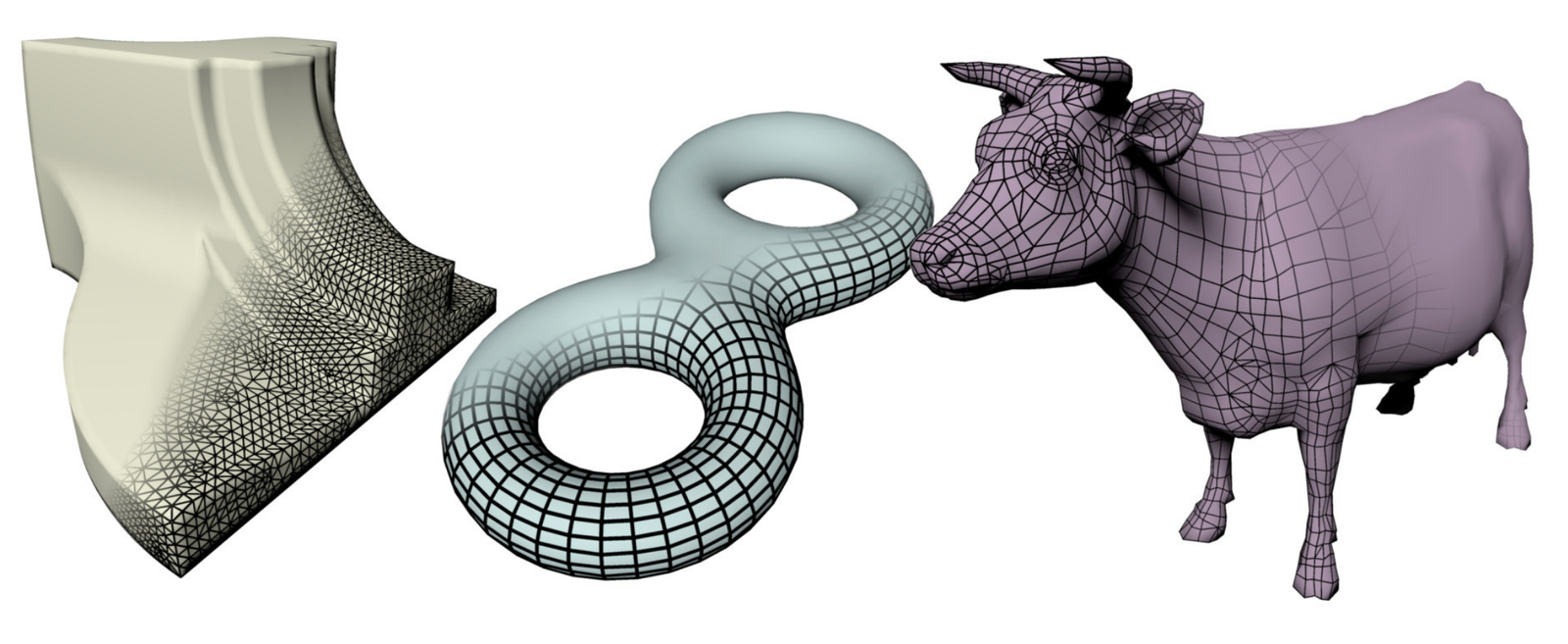

A triangular mesh is the simplest and most widespread representation to approximate a continuous surface by a set of triangles. The more triangles there are, the closer the approximation is to the original surface, but the higher the storage and rendering cost. In practice, a typical 3D model in a video game contains from a few thousand to several hundred thousand triangles. A high-resolution model for cinema can contain several million triangles.

A mesh is described by three types of elements :

Vertices (vertices) : the 3D points that form the

corners of the triangles. The set of vertices is denoted \(\mathcal{V} = (p_1, \dots, p_{N_v})\) with

\(p_i = (x_i, y_i, z_i) \in

\mathbb{R}^3\). Each vertex can carry additional attributes: a

normal (direction perpendicular to the surface at this point), a color,

texture coordinates, etc.

Faces : each face is a triangle defined by a triplet of

vertex indices \(\mathcal{F} = (f_1, \dots,

f_{N_f})\) with \(f_i = (p_{i_1},

p_{i_2}, p_{i_3})\). The faces define the

connectivity (or topology) of the mesh, i.e., how the

vertices are connected to each other.

Edges (optional) : segments connecting two adjacent

vertices \(\mathcal{E} = (e_1, \dots,

e_{N_e})\) with \(e_i = (p_{i_1},

p_{i_2})\). Edges are not always stored explicitly as they can be

inferred from the faces, but they are useful for certain algorithms

(subdivision, simplification, etc.).

Concrete examples of meshes are shown below.

Exemples de maillages triangulaires : modèle de voiture (gauche) et modèle de dragon (droite).

A triangle \(T\) is defined by three vertices \((p_1, p_2, p_3)\). The triangle is the basic element of rasterization and possesses very useful mathematical properties that we detail below.

Every point \(p\) inside the triangle can be expressed as a linear combination of the two edges emanating from \(p_1\):

\[ p \in T \Leftrightarrow S(u,v) = p_1 + u\,(p_2 - p_1) + v\,(p_3 - p_1), \quad u \geq 0, \; v \geq 0, \; u+v \leq 1 \] The parameters \((u,v)\) form a local coordinate system on the triangle. The vertex \(p_1\) corresponds to \((0,0)\), \(p_2\) to \((1,0)\) and \(p_3\) to \((0,1)\).

An equivalent and often more convenient way to parameterize a triangle is to use the barycentric coordinates \((\alpha, \beta, \gamma)\):

\[ p \in T \Leftrightarrow p = \alpha \, p_1 + \beta \, p_2 + \gamma \, p_3, \quad \alpha, \beta, \gamma \in [0,1], \; \alpha + \beta + \gamma = 1 \] Barycentric coordinates have an intuitive geometric interpretation: \(\alpha\) represents the “weight” or the influence of vertex \(p_1\) on the point \(p\). The closer \(p\) is to \(p_1\), the larger \(\alpha\) is (close to 1) and the smaller \(\beta\) and \(\gamma\) are. At the vertices, we have respectively \((\alpha, \beta, \gamma) = (1,0,0)\), \((0,1,0)\) and \((0,0,1)\). At the barycentre of the triangle, the three coordinates equal \(1/3\).

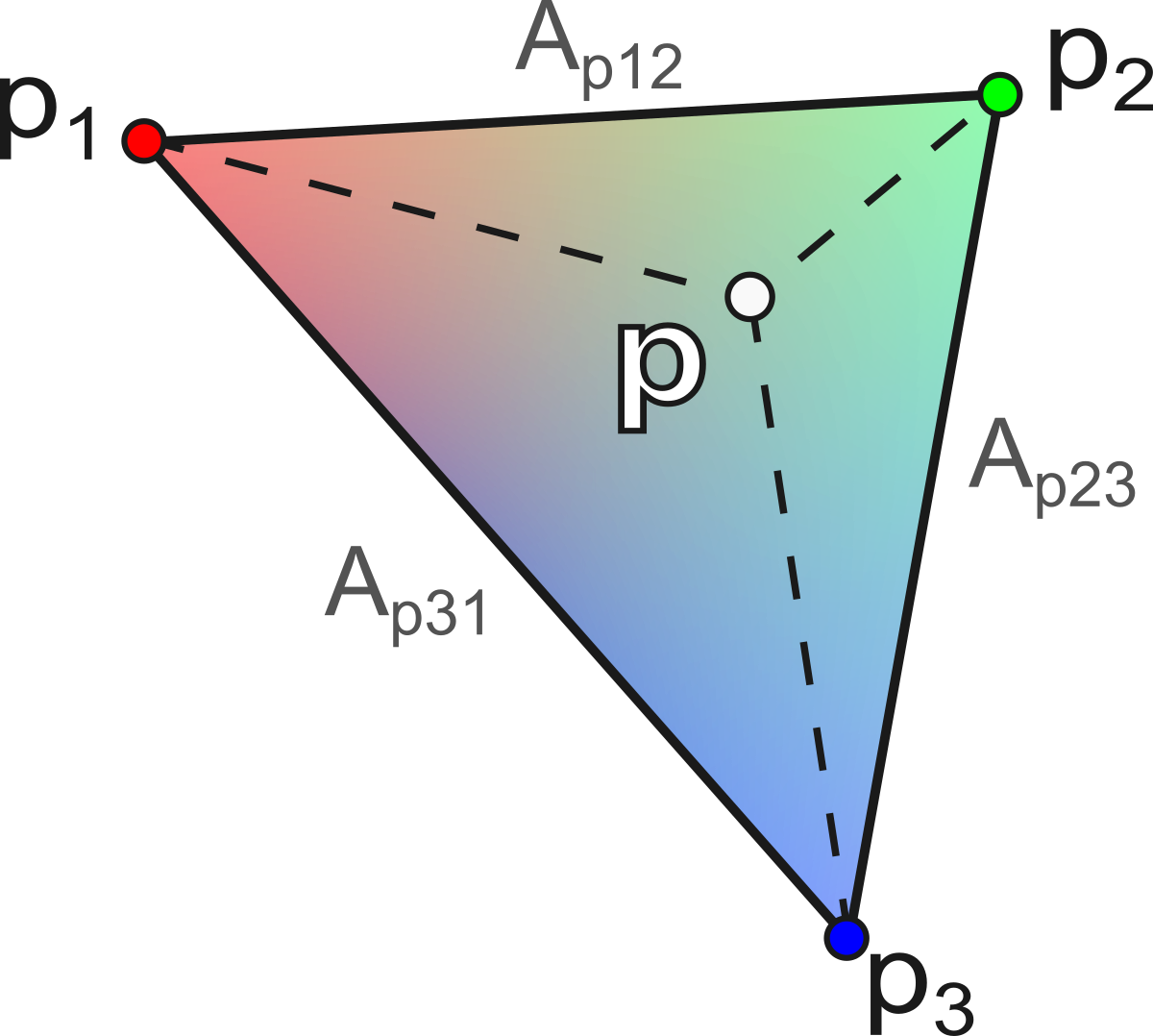

The calculation of barycentric coordinates for a point p is performed via the ratio of the areas of the sub-triangles:

\[ \alpha = \frac{A_{p23}}{A_{123}}, \quad \beta = \frac{A_{p31}}{A_{123}}, \quad \gamma = \frac{A_{p12}}{A_{123}} \] with \(A_{123} = \frac{1}{2} \| (p_2 - p_1) \times (p_3 - p_1) \|\) the total area of the triangle, and \(A_{p23}\), \(A_{p31}\), \(A_{p12}\) the areas of the sub-triangles formed by \(p\) with the opposite edges :

\[ \begin{aligned} A_{p23} &= \tfrac{1}{2} \| (p_2 - p) \times (p_3 - p) \| \\ A_{p31} &= \tfrac{1}{2} \| (p_3 - p) \times (p_1 - p) \| \\ A_{p12} &= \tfrac{1}{2} \| (p_1 - p) \times (p_2 - p) \| \end{aligned} \] This geometric construction is illustrated in the figure below.

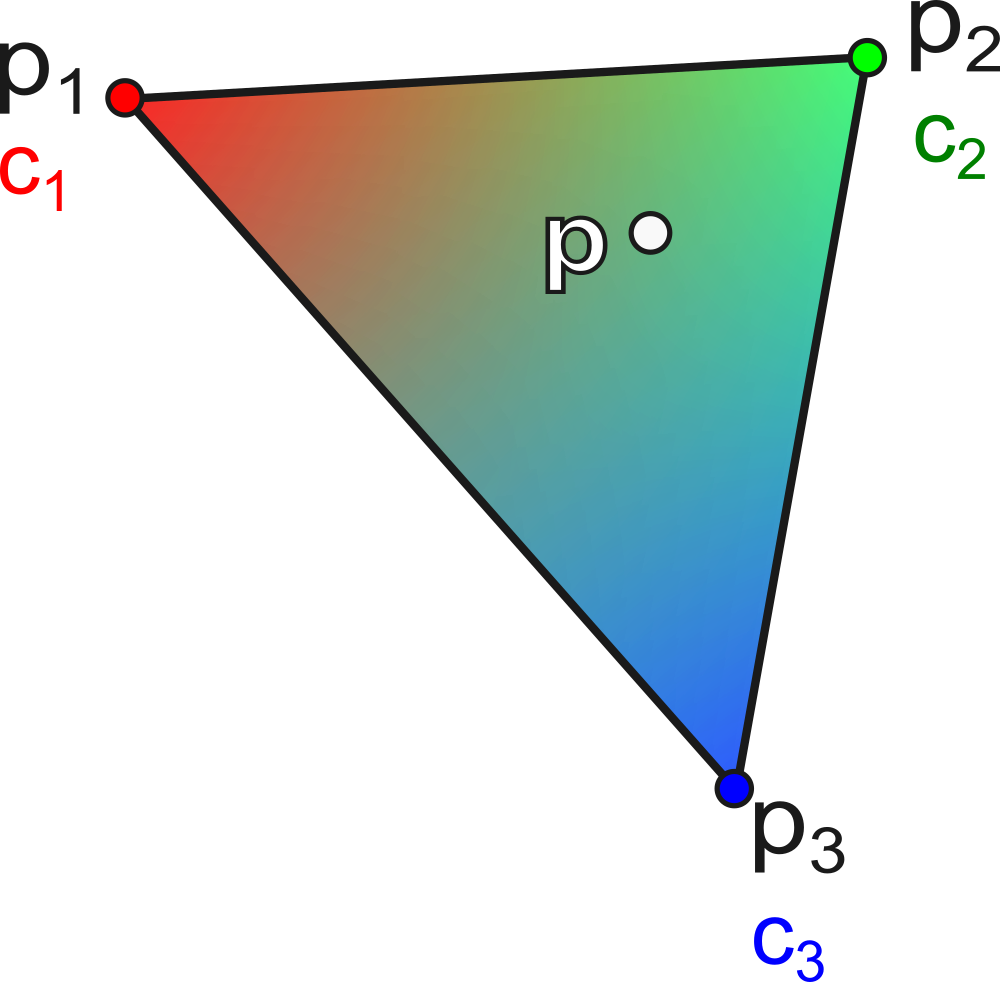

Let a triangle \(T\) with colors \((c_1, c_2, c_3)\) associated with the vertices \((p_1, p_2, p_3)\). The interpolated color at a point \(p\) inside the triangle is:

\[ c(p) = \alpha \, c_1 + \beta \, c_2 + \gamma \, c_3 \] where \((\alpha, \beta, \gamma)\) are the barycentric coordinates of \(p\).

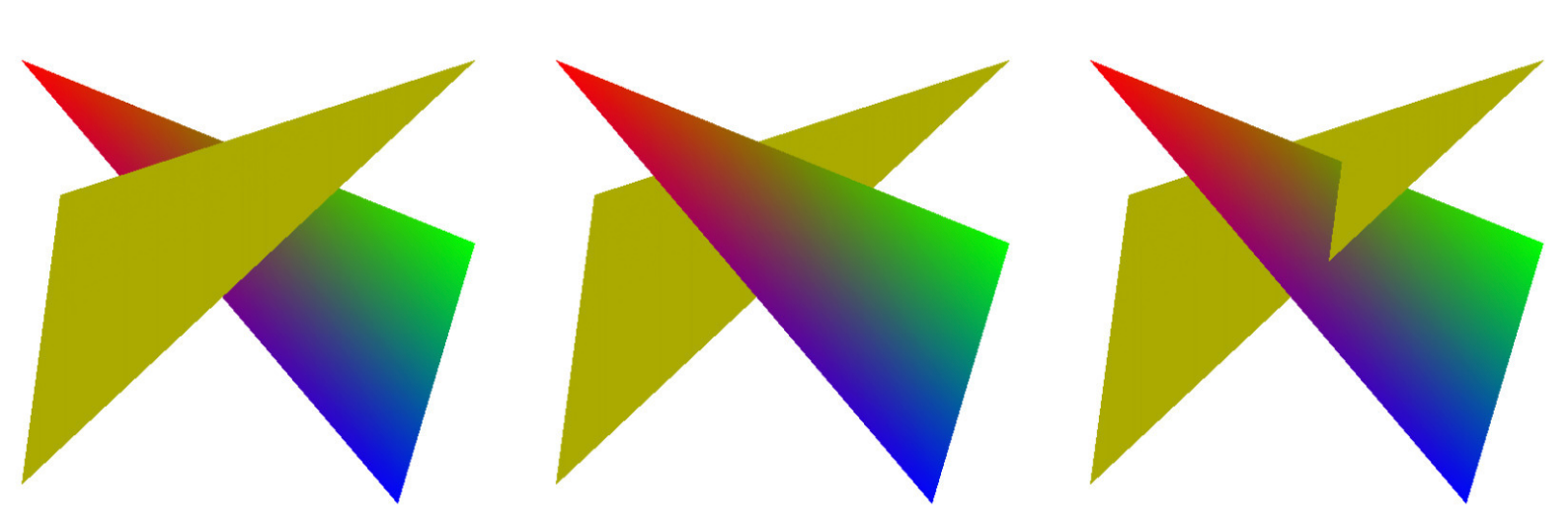

This interpolation is fundamental in rendering: it is used to interpolate colors, normals, texture coordinates, etc., inside each triangle. An example of color interpolation is shown below.

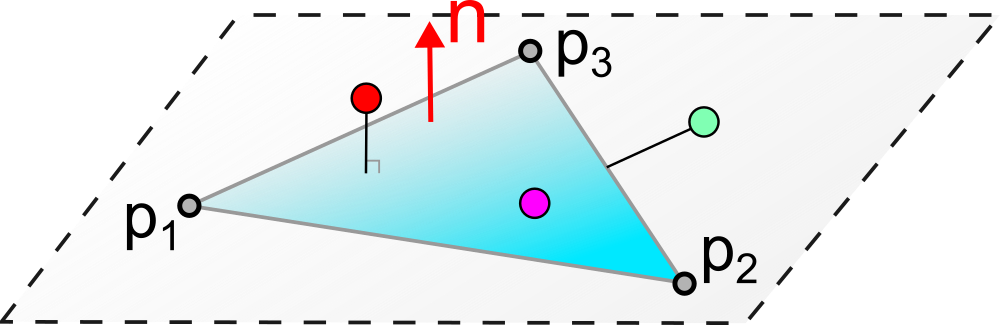

To test whether a point \(p\) is inside a triangle \(T = (p_1, p_2, p_3)\):

Necessary condition: \(p\) must lie in the plane of the triangle. We verify that \((p - p_1) \cdot n = 0\) with \(n = (p_2 - p_1) \times (p_3 - p_1)\) (normal to the triangle).

Sufficient condition: calculation of barycentric coordinates \((\alpha, \beta, \gamma)\). We verify that \(0 \leq \alpha, \beta, \gamma \leq 1\) and \(\alpha + \beta + \gamma = 1\).

The principle of this test is depicted in the following diagram.

Let us consider a tetrahedron \((p_0, p_1, p_2, p_3)\) as an example.

First solution: triangle soup

Each triangle is described by its three coordinates, with no sharing of vertices:

triangles = [(0.0,0.0,0.0), (1.0,0.0,0.0), (0.0,0.0,1.0),

(0.0,0.0,0.0), (0.0,0.0,1.0), (0.0,1.0,0.0),

(0.0,0.0,0.0), (0.0,1.0,0.0), (1.0,0.0,0.0),

(1.0,0.0,0.0), (0.0,1.0,0.0), (0.0,0.0,1.0)]2nd solution: geometry + connectivity (topology)

We separate the positions of the vertices and the indices of the faces:

geometry = [(0.0,0.0,0.0), (1.0,0.0,0.0), (0.0,1.0,0.0), (0.0,0.0,1.0)]

connectivity = [(0,1,3), (0,3,2), (0,2,1), (1,2,3)]This second approach is much more memory-efficient because the shared vertices are stored only once. In the tetrahedron example, the first solution stores \(4 \times 3 = 12\) coordinate triplets (i.e., 36 floating-point numbers), while the second stores only 4 vertices (12 floating-point numbers) plus 4 index triplets (12 integers). For large meshes, the gain is substantial. This is the standard representation used in practice and by graphics APIs (OpenGL, Vulkan, etc.).

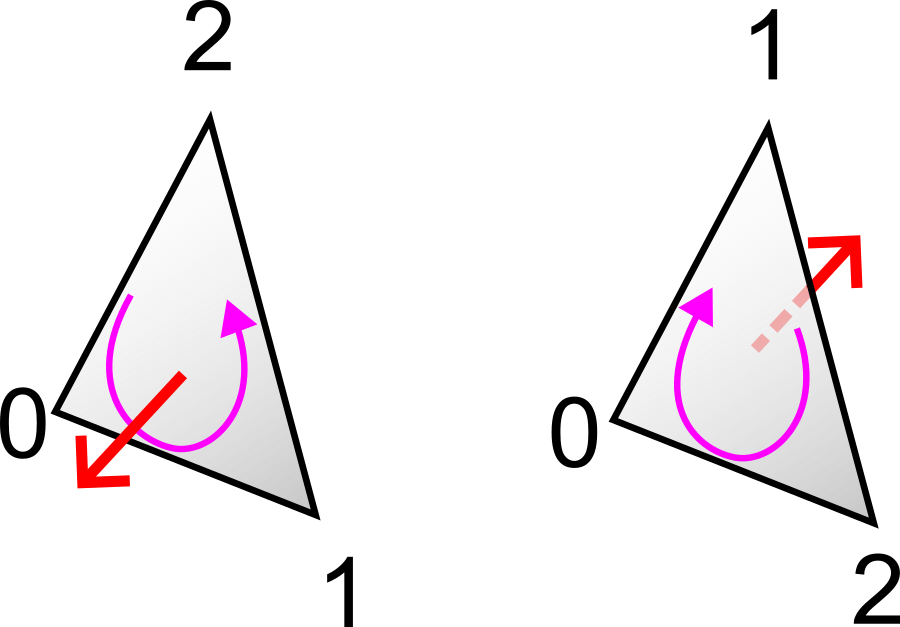

Note: the order of the indices in each face defines the face’s orientation. By convention (called the right-hand rule or counterclockwise convention), when viewing the face from outside the object, the vertices appear in counterclockwise order. This orientation enables the computation of the outward normal of the face by a cross product: \(n = (p_{i_2} - p_{i_1}) \times (p_{i_3} - p_{i_1})\). The GPU uses this information for the back-face culling: triangles viewed from behind (whose normal is oriented opposite to the camera) are not rendered, which reduces the rendering cost by about half. This convention is illustrated below.

The OBJ format (Wavefront) is one of the most widespread text formats for storing 3D meshes. Its simplicity makes it easy to read and write, as well by humans as by programs. An OBJ file is a text file where each line begins with a keyword followed by values:

v x y z: defines a vertex with its 3D

coordinates.vt u v: defines texture coordinates

(for mapping a texture onto the surface).vn x y z: defines a vertex normal

(direction perpendicular to the surface, used for shading).f v1 v2 v3: defines a triangular face

by the indices of its vertices. The indices start at 1

(and not 0). One can also reference normals and textures with the syntax

f v1/vt1/vn1 v2/vt2/vn2 v3/vt3/vn3.Minimal example (tetrahedron):```

v 0.0 0.0 0.0

v 1.0 0.0 0.0

v 0.0 1.0 0.0

v 0.0 0.0 1.0

f 1 2 4

f 1 4 3

f 1 3 2

f 2 3 4Affine transformations are the fundamental operations for positioning, orienting, and scaling objects in 3D space. In computer graphics, they are used continuously: to place an object in the scene, to animate a character (joint rotations), to position the camera, etc.

The translation moves a point by a constant vector \((t_x, t_y, t_z)\):

\[ t(p) = (x + t_x, \; y + t_y, \; z + t_z) \] Translation is not a linear transformation (it cannot be represented by multiplication by a matrix \(3 \times 3\), since \(t(0) \neq 0\) in general). This property gives rise to the need for homogeneous coordinates described later.

Scaling multiplies each coordinate by a scale factor:

\[ s(p) = (s_x \, x, \; s_y \, y, \; s_z \, z) \] In matrix notation :

\[ S = \begin{pmatrix} s_x & 0 & 0 \\ 0 & s_y & 0 \\ 0 & 0 & s_z \end{pmatrix} \] If \(s_x = s_y = s_z\), the scaling is uniform (the object preserves its proportions). Otherwise, it is non-uniform (the object is deformed, for example stretched along an axis).

A rotation preserves distances and angles (it is an isometry). It is described by an orthogonal \(3 \times 3\) matrix:

\[ R = \begin{pmatrix} a & b & c \\ d & e & f \\ g & h & j \end{pmatrix}, \quad R\,R^T = I \text{ et } \det(R) = 1 \] The columns (and rows) of \(R\) form an orthonormal basis: they are mutually orthogonal and of unit length. The condition \(\det(R) = 1\) distinguishes rotations from reflections (which have a determinant of \(-1\)).

There are several ways to parameterize a rotation in 3D: Euler angles (three successive angles around the axes), axis-angle (an axis and a rotation angle around that axis), or quaternions (a four-component representation, widely used in animation for its efficiency and absence of singularities).

Shearing shifts a coordinate in proportion to another. For example:

\[ sh_{xy}(p) = (x + \lambda \, y, \; y, \; z) \] In matrix notation :

\[ Sh_{xy} = \begin{pmatrix} 1 & \lambda & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{pmatrix} \] Shearing preserves volumes (\(\det(Sh) = 1\)) but does not preserve angles (it is not an isometry). It is rarely used intentionally in computer graphics, but may appear as a by-product of certain matrix decompositions. Its geometric effect is visible in the following figure.

Rotation and scaling are linear transformations, representable by \(3 \times 3\) matrices. Translation, however, is a non-linear transformation that cannot be encoded directly by a \(3 \times 3\) matrix.

This poses a practical problem: in computer graphics, one continually chains rotations, scalings and translations to position objects in the scene. For example, to place an object in the world frame, one can apply a scaling (to adjust its size), then a rotation (to orient it), then a translation (to position it).

If only rotations and scalings were matrices, one would need to maintain two separate representations (one matrix for the linear part and a vector for the translation), which would considerably complicate the code.

Idea: add an extra coordinate to obtain a 4D unified representation, in which all affine transformations (including translation) are expressed as matrix products.

The unified affine transformation is then written as a matrix product \(4 \times 4\) :

\[ \begin{pmatrix} p' \\ 1 \end{pmatrix} = \begin{pmatrix} L & t \\ 0 & 1 \end{pmatrix} \begin{pmatrix} p \\ 1 \end{pmatrix} = \begin{pmatrix} L\,p + t \\ 1 \end{pmatrix} \] with \(L\) the linear part (3 × 3) and \(t\) the translation vector.

For a point \(p = (x, y)\), we add a coordinate: \(p = (x, y, 1)\).

Translation becomes a linear operation :

\[ \begin{pmatrix} x + t_x \\ y + t_y \\ 1 \end{pmatrix} = \begin{pmatrix} 1 & 0 & t_x \\ 0 & 1 & t_y \\ 0 & 0 & 1 \end{pmatrix} \begin{pmatrix} x \\ y \\ 1 \end{pmatrix} \] The same applies to rotations and scaling, which are expressed as matrices \(3 \times 3\) in 2D homogeneous coordinates.

In 3D, we use four-component vectors and matrices \(4 \times 4\).

Any sequence of translations, rotations and scalings can be factorized into a unique product of matrices:

\[ M = T_0 \, R_0 \, S_0 \, T_1 \, R_1 \, S_1 \, \dots \] The advantage is substantial: regardless of the complexity of the transformation chain, the result is always a single matrix \(4 \times 4\). Applying this transformation to a point amounts to a single matrix-vector multiplication. This property is massively exploited by the GPU, which can transform millions of vertices by applying the same matrix \(M\) to each of them in parallel.

[Attention]: the order of multiplications is important. Matrix transformations are not commutative in general: \(T \, R \neq R \, T\). By convention, the transformation on the far right is applied first. Thus, \(M = T \, R \, S\) means: first scaling, then rotation, then translation.

The advantage of generalized coordinates is the natural differentiation between points and vectors :

Point : \((x, y, z, \mathbf{1})\) — the translation is applied.

\[ M \begin{pmatrix} p \\ 1 \end{pmatrix} = \begin{pmatrix} L & t \\ 0 & 1 \end{pmatrix} \begin{pmatrix} p \\ 1 \end{pmatrix} = \begin{pmatrix} L\,p + t \\ 1 \end{pmatrix} \] Vector : \((x, y, z, \mathbf{0})\) — the translation does not apply.

\[ M \begin{pmatrix} v \\ 0 \end{pmatrix} = \begin{pmatrix} L & t \\ 0 & 1 \end{pmatrix} \begin{pmatrix} v \\ 0 \end{pmatrix} = \begin{pmatrix} L\,v \\ 0 \end{pmatrix} \] This behavior is consistent with geometric operations:

This distinction is summarized in the diagram below.

Let us consider the case of a generalized point with coordinate \(w \neq 1\): \(p_{4D} = (x, y, z, w)\).

The “renormalization” (or projection) onto the space of 3D points gives: \(p_{3D} = (x/w, y/w, z/w, 1)\).

Example :

The sum of two points yields:

\[ (x_1, y_1, z_1, 1) + (x_2, y_2, z_2, 1) = (x_1+x_2, \; y_1+y_2, \; z_1+z_2, \; 2) \] After homogenization :

\[ p_{3D} = \left(\frac{x_1+x_2}{2}, \; \frac{y_1+y_2}{2}, \; \frac{z_1+z_2}{2}, \; 1\right) \] What corresponds to the barycenter of the two points.

The advantage of projective space is to encode rational operations (such as perspective) via a simple matrix multiplication.

Perspective is modeled by division by depth.

In 2D (1D projection), for a point \((x, y)\) projected onto a focal plane at distance \(f\):

\[ y' = f \, \frac{y}{x} \] This nonlinear model is written linearly in homogeneous coordinates:

\[ \begin{pmatrix} f \, y \\ x \end{pmatrix} = \begin{pmatrix} 0 & f \\ 1 & 0 \end{pmatrix} \begin{pmatrix} x \\ y \end{pmatrix} \] After homogenization (division by the last component \(x\)), we indeed obtain \(y' = f\,y/x\).

The real points (2D or 3D) are those whose last component has the value \(w = 1\) (obtained after homogenization). The vectors correspond to \(w = 0\). This projection mechanism is represented in the following figure.

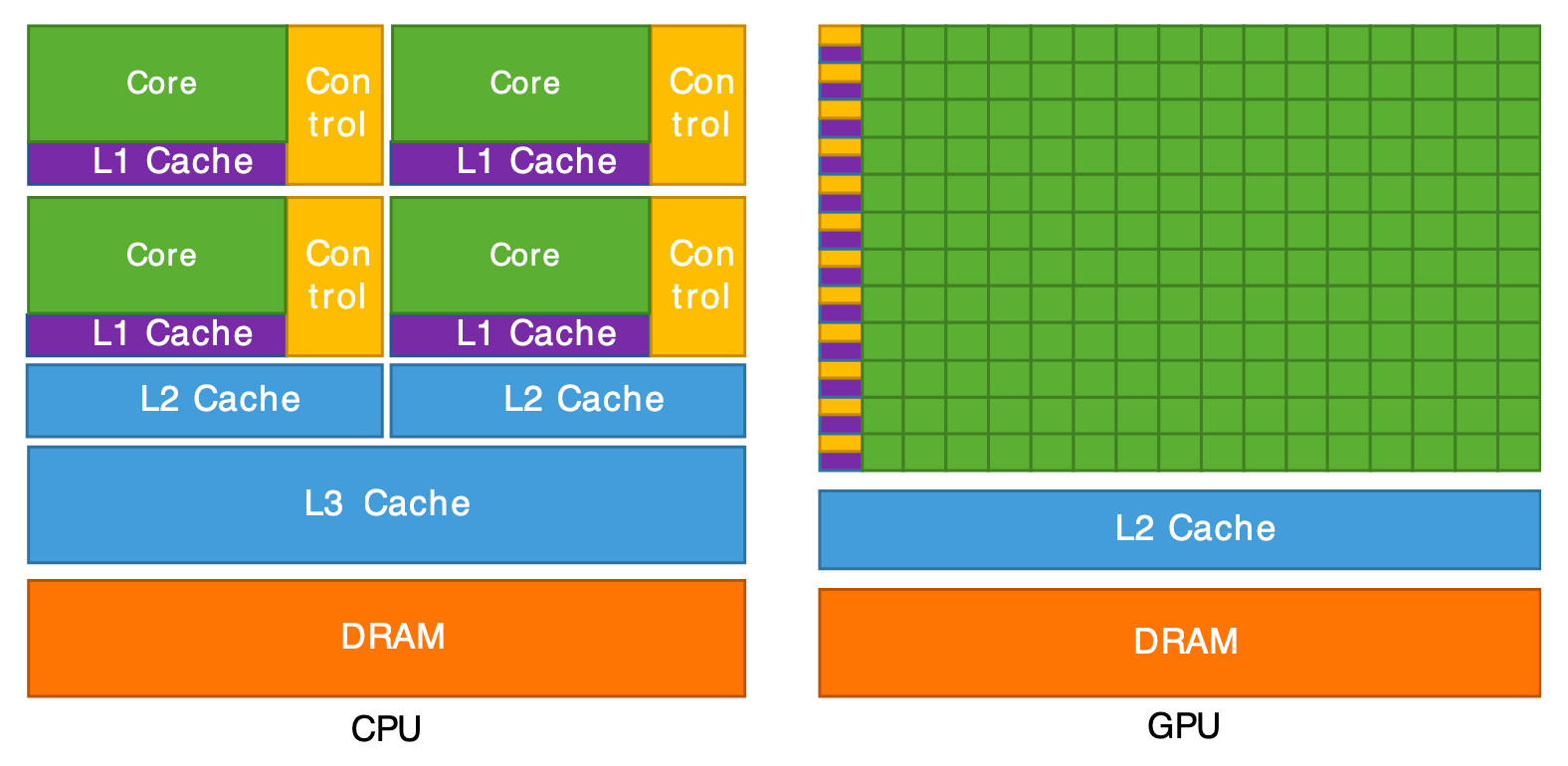

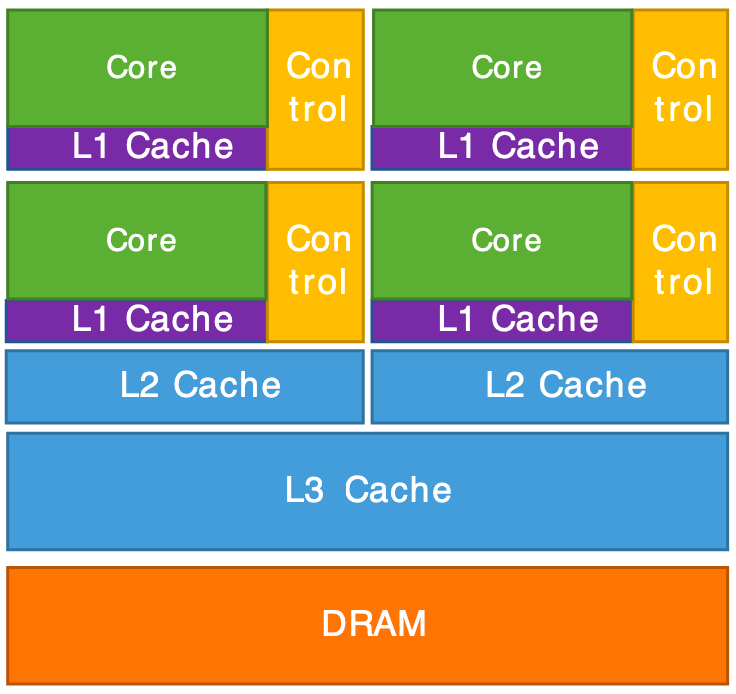

A computer has two main processors for computation:

The figures below show the physical aspect and the internal architecture of these two types of processors.

CPU (gauche) et GPU (droite).

Architecture interne d'un CPU (gauche) et d'un GPU (droite).

The GPU is, in summary, a supercomputer optimized to perform the same operation on a large amount of data in parallel. That’s exactly what we need for graphics rendering: to transform millions of vertices (vertex shader) and then color millions of pixels (fragment shader). The CPU, for its part, handles the program logic (scene management, data loading, user interactions) and sends the rendering commands to the GPU.

OpenGL (Open Graphics Library) is an API (Application Programming Interface) for communicating with the GPU, oriented toward 3D graphics.

Features:

Other graphics APIs: Vulkan, WebGL, WebGPU, DirectX (Windows), Metal (Mac).

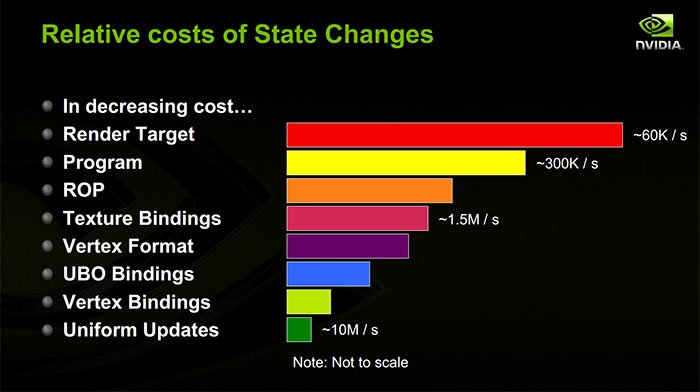

The CPU and the GPU each have their own memory (RAM and VRAM respectively) and cannot directly access each other’s memory. The rendering process therefore requires explicit communication between the two, orchestrated by the OpenGL API. This process follows three main stages:

Data preparation (CPU): the C++ program (running on the CPU) loads or computes the geometric data of the scene: vertex positions, colors, normals, texture coordinates, connectivity indices, etc. These data are organized into RAM arrays.

Sending data (CPU \(\rightarrow\) GPU): the data are transferred in blocks from RAM to VRAM via OpenGL calls. This transfer is relatively costly (the bus between CPU and GPU has limited bandwidth), which is why we aim to minimize it: ideally, static data (meshes that do not change) are sent only once at startup. Only the data that change per frame (transformation matrices, animation parameters) are transmitted per frame.

Shader Execution (GPU): once the data are in VRAM, the GPU executes shader programs in parallel on each vertex and then on each pixel. The CPU no longer intervenes during this phase: it simply issues the “draw” command and the GPU handles the rest autonomously. The result is the final image, stored in the framebuffer of VRAM, which is then displayed on the screen.

The following diagrams detail this communication pipeline.

The shaders are small programs that run directly on the GPU. They are written in a dedicated language called GLSL (OpenGL Shading Language), whose syntax is close to C. The term “shader” comes from “shading” (shading), because their initial role was to compute the shading of surfaces, but they are today used for all kinds of graphical calculations.

The fundamental peculiarity of shaders is that they are executed massively in parallel: the same program is launched simultaneously on thousands of vertices or pixels. The programmer writes the code for a single vertex or pixel, and the GPU takes care of executing it for all.

Two main types of shaders are involved in the rendering pipeline:

Executed once for each vertex of the mesh. Its main role is to transform the position of the vertex from the 3D space of the scene to the 2D space of the screen (by applying the transformation and projection matrices). It can also calculate and transmit attributes (colors, transformed normals, texture coordinates, etc.) that will be used by the fragment shader.

#version 330 core

layout(location = 0) in vec4 position;

layout(location = 1) in vec4 color;

out vec4 vertexColor;

void main()

{

gl_Position = position;

vertexColor = color;

}In this example :

#version 330 core : indicates the GLSL version used

(corresponding to OpenGL 3.3).layout(location = 0) in vec4 position : declares an

input attribute (the vertex position), received from data sent by the

CPU. The location = 0 indicates the index of the attribute

buffer.out vec4 vertexColor : declares an output variable that

will be passed to the fragment shader. Between the vertex shader and the

fragment shader, this variable will be automatically interpolated by

rasterization.gl_Position : a predefined special variable provided by

OpenGL, in which the vertex shader must write the final position of the

vertex (in clip coordinates).Executed once for each pixel (fragment) covered by a triangle after rasterization. Its role is to determine the final color of the pixel based on the interpolated attributes (color, normal, texture coordinates), lighting, textures, etc. It is in the fragment shader that we implement illumination models and visual effects.

#version 330 core

in vec4 vertexColor;

out vec4 fragColor;

void main()

{

fragColor = vertexColor;

}In this example:

in vec4 vertexColor : input variable coming from the

vertex shader. Its value has been automatically interpolated by

rasterization between the three vertices of the current triangle, using

the fragment’s barycentric coordinates.out vec4 fragColor : the output color of the fragment,

which will be written to the final image (framebuffer).In addition to attributes (per-vertex) and interpolated variables (per-fragment), shaders can receive uniform variables (uniform): constant values for all vertices or fragments in a single render call. They are defined on the CPU side and sent to the GPU. Typical examples are transformation matrices, the camera position, the light direction, the current time, etc.

uniform mat4 modelViewProjection; // matrice de transformation (4x4)

uniform vec3 lightDirection; // direction de la lumièreThe full pipeline works as follows:

The attributes transmitted from the vertex shader to the fragment

shader (such as vertexColor) are automatically

interpolated barycentrically between the triangle’s

vertices, which exactly corresponds to the barycentric interpolation

described above. The entirety of this pipeline is summarized in the

diagram below.

As seen in the previous chapter, a 3D scene is described by models (surfaces), light sources, and a camera. Rendering by rasterization yields a 2D image of this scene.

However, at the level of the GPU and OpenGL, there is no explicit notion of a camera or light. The GPU manipulates only vertices with their attributes (position, color, normal, etc.) as input to the vertex shader, and fragments (pixels) colored as output of the fragment shader. The programmer’s job is to translate high-level concepts (camera, light, materials) into operations on the vertices and fragments via shaders.

The rendering pipeline breaks down into three main stages. The first is the transformation of the vertices, performed by the vertex shader: each vertex is projected from the 3D space of the scene to the 2D space of the screen by applying the transformation and projection matrices. The second stage is the rasterization: the triangles projected in 2D are converted into fragments (pixels) by an automatic discretization process. Finally, the third stage is the color calculation, performed by the fragment shader: for each fragment, its final color is determined based on lighting, the material and the textures.

OpenGL only draws triangles whose vertices lie inside the cube \([-1, 1]^3\). This normalized space is called Normalized Device Coordinates (NDC). The first two components \((x_{\text{ndc}}, y_{\text{ndc}})\) correspond to the screen coordinates, whereas \(z_{\text{ndc}}\) encodes the depth, i.e., the distance to the camera in image space. This normalized cube is depicted in the following figure.

The goal of projection is to convert the global coordinates (world space) to NDC coordinates. This conversion must, on the one hand, define a camera model that specifies which part of 3D space is visible, and on the other hand apply the perspective so that distant objects appear smaller than nearby objects.

The most common perspective model uses a viewing volume in the shape of a pyramid frustum (frustum), delimited by a near plane (\(z_{\text{near}}\)) and a far plane (\(z_{\text{far}}\)), as illustrated below.

Let a point with coordinates \((x, y, z)\) in world space. The NDC coordinates \((x_{\text{ndc}}, y_{\text{ndc}}, z_{\text{ndc}})\) are computed as follows:

Coordinates \(x\) and \(y\):

\[ x_{\text{ndc}} = \frac{z_{\text{near}}}{-z} \cdot \frac{x}{w} = \frac{1}{\tan(\theta/2)} \cdot \frac{x}{-z} \]

\[ y_{\text{ndc}} = \frac{z_{\text{near}}}{-z} \cdot \frac{y}{h} = \frac{r}{\tan(\theta/2)} \cdot \frac{y}{-z} \] where \(\theta\) is the field of view (Field of View, FOV) and \(r = h/w\) is the aspect ratio.

Division by \(-z\) produces the perspective effect: distant objects (large \(|z|\)) are reduced in size.

Z coordinate \(z\) (depth) :

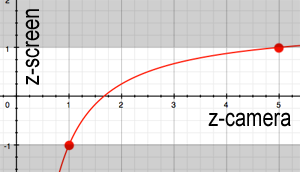

The NDC depth is a non-linear function of \(z\) :

\[ z_{\text{ndc}} = \frac{1}{-z} \left( -\frac{z_{\text{far}} + z_{\text{near}}}{z_{\text{far}} - z_{\text{near}}} \cdot z - \frac{2 \, z_{\text{near}} \, z_{\text{far}}}{z_{\text{far}} - z_{\text{near}}} \right) \] with the correspondences: \(z = -z_{\text{near}} \rightarrow z_{\text{ndc}} = -1\) and \(z = -z_{\text{far}} \rightarrow z_{\text{ndc}} = 1\).

The variation in \(1/z\) implies a finer precision near the camera and coarser as the distance increases. This is a deliberate choice: nearby objects require better depth resolution to avoid visual artifacts.

In homogeneous coordinates, the projection is expressed as a \(4 \times 4\) matrix product:

\[ p_{\text{ndc}} = \text{Proj} \times p \] with :

\[ \text{Proj} = \begin{pmatrix} \frac{1}{\tan(\theta/2)} & 0 & 0 & 0 \\ 0 & \frac{r}{\tan(\theta/2)} & 0 & 0 \\ 0 & 0 & -\frac{z_{\text{far}} + z_{\text{near}}}{z_{\text{far}} - z_{\text{near}}} & -\frac{2 \, z_{\text{near}} \, z_{\text{far}}}{z_{\text{far}} - z_{\text{near}}} \\ 0 & 0 & -1 & 0 \end{pmatrix} \] After multiplication, the obtained vector has a component \(w \neq 1\). The homogenization (division by \(w\)) produces the final NDC coordinates. This is exactly the mechanism of projective space seen in the previous chapter.

The result is a deformed space inside the cube \([-1, 1]^3\). The final image corresponds to the view \((x_{\text{ndc}}, y_{\text{ndc}})\) of this cube, while \(z_{\text{ndc}}\) represents the depth seen from the camera in image space. Everything outside the cube \([-1, 1]^3\) is automatically discarded by the GPU (clipping).

The variation in \(1/z\) of the NDC depth has an important consequence: the precision depends strongly on the choice of \(z_{\text{near}}\). The following diagram shows this nonuniform distribution of precision.

If \(z_{\text{near}}\) is too close to 0, all precision is concentrated on distances very close to the camera. Distant objects end up with depth values almost identical after discretization. This causes a visual artifact called z-fighting (or depth-fighting): two surfaces close in depth “flicker” randomly because the GPU cannot determine which is in front. The graph below illustrates the curve of \(z_{\text{ndc}}\) as a function of \(z\).

Practical rule: choose \(z_{\text{near}}\) as large as possible (while keeping objects visible in the frustum) to maximize depth precision across the entire scene.

The view matrix transforms coordinates from world space to camera space (view space). It positions and orients the camera in the scene, according to the principle illustrated below.

The camera is described by a \(4 \times 4\) matrix containing its local axes and its position:

\[ \text{Cam} = \begin{pmatrix} \text{right}_x & \text{up}_x & \text{back}_x & \text{eye}_x \\ \text{right}_y & \text{up}_y & \text{back}_y & \text{eye}_y \\ \text{right}_z & \text{up}_z & \text{back}_z & \text{eye}_z \\ 0 & 0 & 0 & 1 \end{pmatrix} = \begin{pmatrix} O & \text{eye} \\ 0 & 1 \end{pmatrix} \] where \(O = (\text{right}, \text{up}, \text{back})\) is the camera rotation matrix (orthonormal basis) and \(\text{eye}\) is its position in the world.

The view matrix is the inverse of the camera matrix:

\[ \text{View} = \text{Cam}^{-1} = \begin{pmatrix} O^T & -O^T \cdot \text{eye} \\ 0 & 1 \end{pmatrix} \] This inversion is very efficient to compute because \(O\) is orthogonal (\(O^{-1} = O^T\)). The view matrix maps the camera position to the origin and aligns its axes with the axes of the frame.

The model matrix positions an object in the world. It transforms the object’s local coordinates to the world space coordinates:

\[ \text{Model} = \begin{pmatrix} R & t \\ 0 & 1 \end{pmatrix} \] where \(R\) is the rotation matrix (orientation of the object) and \(t\) is the translation vector (position of the object). The following figure shows this passage from local coordinates to world space.

The importance of separating an object’s geometry from its position is fundamental. The object’s geometric coordinates (mesh) are loaded only once into VRAM, and to move or orient the object, it suffices to modify the Model matrix, namely a simple matrix \(4 \times 4\) of 16 floats. This separation also enables instancing: the same mesh can be drawn at several locations in the scene by applying different Model matrices, without duplicating the geometric data in memory. For example, a forest consisting of thousands of trees may store only a single tree mesh, each instance having its own Model matrix (position, rotation, scale).

The complete transformation of a vertex, from its local coordinates to NDC space, is the composition of the three matrices:

\[ p_{\text{ndc}} = \text{Proj} \times \text{View} \times \text{Model} \times p \] The vertex starts from its local coordinates \(p = (x, y, z, 1)\) in the object space, where the geometry is defined relative to the object’s origin. The Model matrix places it in the world space: \(p_{\text{world}} = \text{Model} \times p\), applying the object’s rotation, scale, and translation. The View matrix then transforms this position into the camera space: \(p_{\text{view}} = \text{View} \times p_{\text{world}}\), where the camera is at the origin and looks in the direction \(-z\). Finally, the Projection matrix converts the coordinates to NDC: \(p_{\text{ndc}} = \text{Proj} \times p_{\text{view}}\), applying perspective and normalizing the coordinates into the cube \([-1, 1]^3\) after homogenization (division by \(w\)).

This chain constitutes the first stage of the graphics pipeline, performed by the vertex shader.

#version 330 core

layout (location = 0) in vec3 vertex_position;

uniform mat4 model;

uniform mat4 view;

uniform mat4 projection;

void main() {

gl_Position = projection * view * model * vec4(vertex_position, 1.0);

}The three matrices are sent to the GPU as uniform variables from the C++ code. The GPU then applies this transformation in parallel on all vertices. This is much more efficient than computing the new positions on the CPU: instead of transferring \(N\) modified positions per frame, we transfer only three matrices \(4 \times 4\) (i.e., 48 floats).

void main_loop() {

// Mise à jour des matrices

glUniform(shader, Model);

glUniform(shader, View);

glUniform(shader, Projection);

draw(mesh_drawable);

}The rasterization (or rasterisation, or facetization) is the conversion of vector data (triangles defined by vertices) into discrete elements: des? Wait—this must be translated consistently.

The conversion of vector data (triangles defined by vertices) into discrete elements: pixels (or fragments). It is an automatic and non-programmable step in the OpenGL pipeline.

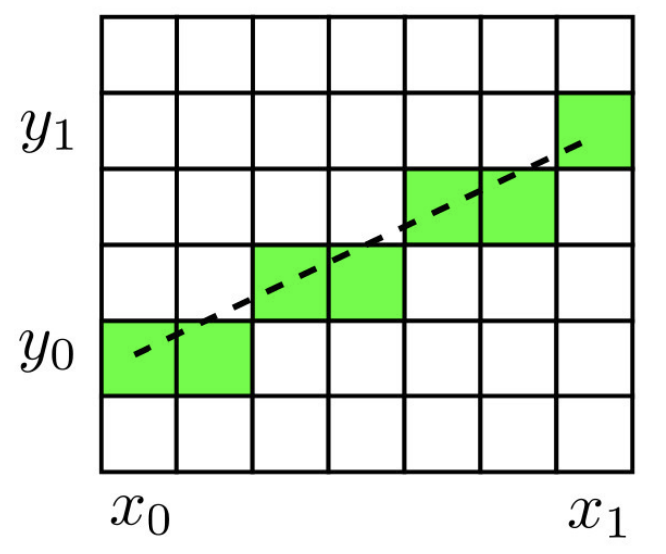

The fundamental operation is the following: given a triangle defined by three 2D points (after projection), determine the set of pixels it covers, as can be seen in the following figure.

Before tackling the rasterization of triangles, consider simpler primitives to understand the principle of discretization.

Point : a single pixel.

im(x0, y0) = c;Rectangle : two nested loops.

for(int kx = x0; kx < x1; kx++)

for(int ky = y0; ky < y1; ky++)

im(kx, ky) = c;Horizontal, vertical or diagonal segment: a single loop suffices.

// Segment horizontal

for(int kx = x0; kx < x1; kx++)

im(kx, y0) = c;For an arbitrary segment, the discretization is no longer trivial: several pixelated approximations are possible, as shown in the image below. One must choose an efficient and deterministic algorithm.

The Bresenham’s algorithm is a classic and very efficient algorithm for rasterizing a segment. It relies solely on integer operations, which makes it fast and accurate.

Principle: we move along the x-axis pixel by pixel. At each step, we evaluate whether the accumulated error in \(y\) exceeds \(0.5\) :

The progression of the algorithm is visualized in the following figure.

int dx = x1 - x0, dy = y1 - y0;

float a = float(dy) / float(dx);

float erreur = 0.0f;

int y = y0;

for(int x = x0; x <= x1; x++)

{

im(x, y) = c;

erreur += a;

if(erreur >= 0.5f)

{

y = y + 1;

erreur = erreur - 1.0f;

}

}Note: by multiplying all values by \(2 \, dx\), one can eliminate divisions and floating-point operations to obtain a purely integer algorithm. The algorithm above handles the case \(0 \leq dy \leq dx\); the other octants are treated by symmetry.

The rasterization of a triangle \((p_0, p_1, p_2)\) is performed by the scanline algorithm:

Discretize the edges: for each edge of the triangle (\((p_0, p_1)\), \((p_1, p_2)\), \((p_2, p_0)\)), apply the Bresenham algorithm to obtain the boundary pixels.

Store the horizontal bounds: for each line \(y\), store the values \(x_{\min}\) and \(x_{\max}\) of the boundary pixels.

Fill line by line: for each line \(y\), color all the pixels from \(x_{\min}\) to \(x_{\max}\).

The two steps are illustrated below: edge discretization and filling.

Rasterization d'un triangle par scanline : discrétisation des arêtes (gauche) et remplissage horizontal (droite).

Example of a scanline table for a triangle :

| \(y\) | \(x_{\min}\) | \(x_{\max}\) |

|---|---|---|

| 0 | 3 | 5 |

| 1 | 0 | 5 |

| 2 | 1 | 4 |

| 3 | 2 | 4 |

| 4 | 3 | 3 |

During rasterization, the barycentric coordinates of each fragment are computed to interpolate the vertex attributes (color, normal, texture coordinates, depth) linearly inside the triangle.

The Phong model is the most classic illumination model in real-time rendering. It rests on the observation that the appearance of a lit surface can be decomposed into three distinct physical phenomena. The first is the ambient component, which represents a uniform base color, independent of geometry and light position: it roughly models the indirect light that bathes the entire scene. The second is the diffuse component, which depends on the local orientation of the surface relative to the light: a surface facing the light is bright, a surface facing the other way is dark. The third is the specular component, which models the shiny reflection of the light source visible on smooth surfaces, and which depends on the observer’s viewpoint. These three contributions are represented on the following figure.

The final color of a point is the sum of these three components:

\[ C = C_a + C_d + C_s \] The calculation of each component involves two color properties: the color of the light source \(C_\ell = (r_\ell, g_\ell, b_\ell) \in [0, 1]^3\) and the color of the surface (or albedo) \(C_o = (r_o, g_o, b_o) \in [0, 1]^3\). Their component-wise multiplication (\((r_\ell \cdot r_o, \, g_\ell \cdot g_o, \, b_\ell \cdot b_o)\)) models the filtering of light by the material: a red surface illuminated by white light appears red, while the same surface illuminated by a green light appears black (because the red component of the light is zero).

The ambient component models a uniform illumination of the scene (approximate indirect lighting). It does not depend on either the position or the orientation of the surface:

\[ C_a = \alpha_a \, C_\ell \, C_o \] with \(\alpha_a \in [0, 1]\) the ambient coefficient. This term prevents surfaces that are not directly illuminated from being completely black.

The diffuse component models the light reflected uniformly in all directions by a matte surface (Lambertian reflection). It depends on the angle between the normal \(n\) to the surface and the direction of the light \(u_\ell\):

\[ C_d = \alpha_d \, (n \cdot u_\ell)_+ \, C_\ell \, C_o \] The coefficient \(\alpha_d \in [0, 1]\) controls the diffuse reflection intensity of the material. The central term is the diffuse factor \((n \cdot u_\ell)_+ = \max(n \cdot u_\ell, \, 0)\), which is the dot product between the unit normal vector \(n\) to the surface and the unit direction \(u_\ell\) from the point toward the light source. This dot product measures the cosine of the angle between the normal and the direction of the light. When the surface faces the light directly (\(n\) parallel to \(u_\ell\)), the factor equals 1 and the illumination is maximal. When the surface is grazing (\(n\) perpendicular to \(u_\ell\)), the factor equals 0. When the surface is turned opposite to the light, the dot product is negative, and the truncation \(\max(\cdot, 0)\) forces the contribution to zero, avoiding physically absurd lighting.

This geometric mechanism is schematically illustrated below.

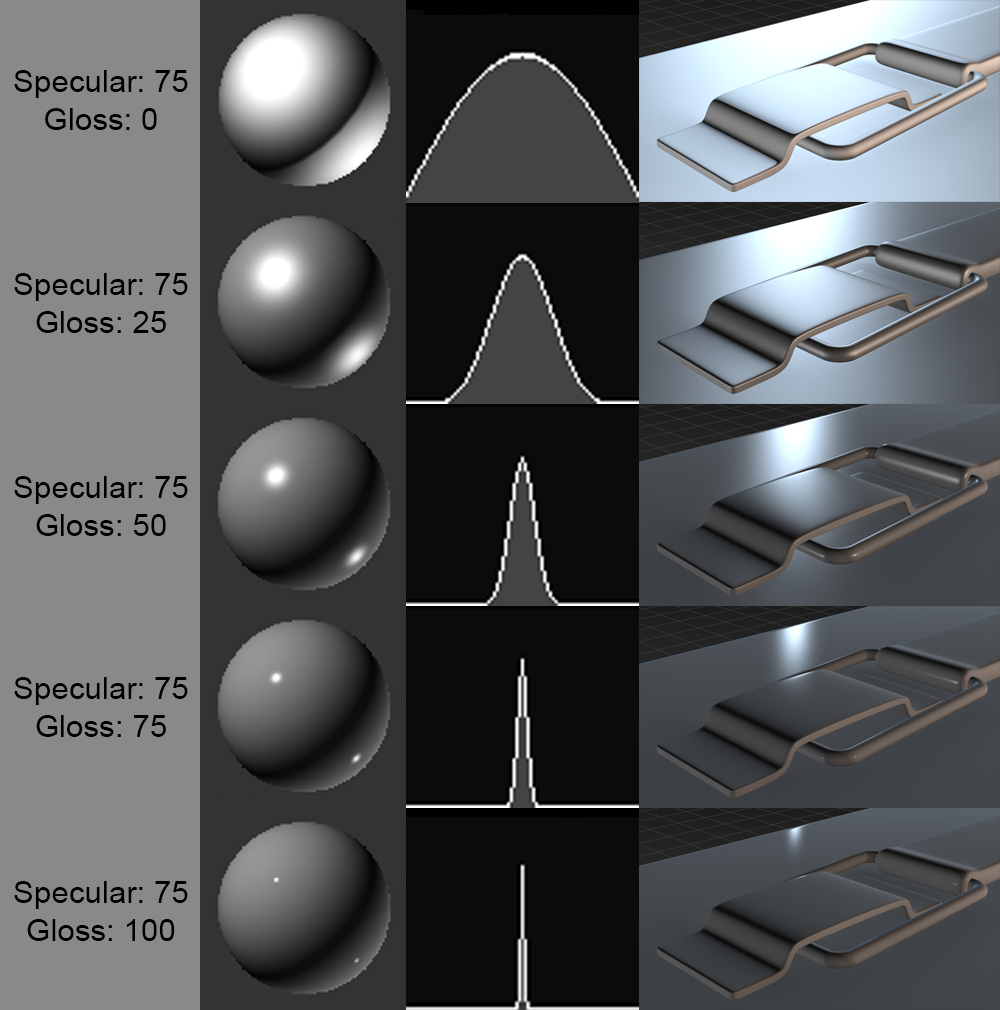

The specular component models the reflection of the light source on the surface. Unlike the diffuse component, it depends on the camera’s viewpoint:

\[ C_s = \alpha_s \, (u_r \cdot u_v)_+^{s_{\exp}} \, C_\ell \] The coefficient \(\alpha_s \in [0, 1]\) controls the intensity of the specular reflection. The shininess exponent \(s_{\exp}\) (typically between 64 and 256) determines the size of the highlight: the higher it is, the more the highlight is concentrated in a narrow and sharp spot (very polished surface), while a low exponent produces a broad and diffuse highlight (slightly rough surface).

The vector \(u_r\) is the

direction of reflection of the light with respect to

the normal, calculated by the formula \(u_r =

2 \, (u_\ell \cdot n) \, n - u_\ell\) (in GLSL:

reflect(-u_l, n)). The vector \(u_v\) is the unit direction from the

surface point toward the camera. The dot product \((u_r \cdot u_v)\) measures the alignment

between the reflection direction and the viewing direction: the

highlight is maximal when the observer lies exactly in the mirror

reflection direction of the light.

The specular component does not depend on the color of the surface \(C_o\) but only on \(C_\ell\), which corresponds to the physical behavior of reflections on dielectric materials (plastic, ceramic, etc.) : the reflection of white light on a red surface remains white. The geometry of the specular reflection and the influence of the shininess exponent are detailed in the following figures.

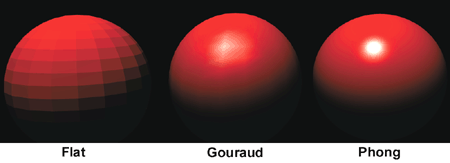

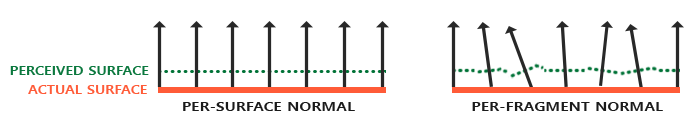

The Phong model defines the illumination formula at a point on the surface, but the question remains where and how often this formula is evaluated. Three classic strategies exist, with a trade-off between computational cost and visual quality.

The flat shading is the simplest approach: the color is computed once per triangle, using the geometric normal of the face. All pixels of the triangle receive exactly the same color. The result has a faceted appearance where every triangle is clearly visible, which may be acceptable for non-photorealistic rendering but is unsuitable for smooth surfaces.

The Gouraud shading improves quality by computing the illumination at the vertices of the triangle (in the vertex shader), then by linearly interpolating the resulting color inside the triangle during rasterization. The color transitions between adjacent triangles become smooth, giving the appearance of a smooth surface. However, this approach presents an important drawback: if a specular highlight falls in the middle of a large triangle, far from any vertex, it will be completely missed because no vertex has “seen” the reflection.

The Phong shading solves this problem by interpolating not the color, but the normal between the triangle’s vertices. The illumination is then calculated for each fragment (in the fragment shader) with this interpolated normal. The specular reflection is thus correctly computed at every point on the surface, even at the center of a large triangle. The visual difference among these three approaches is shown in the following figure.

In practice, the Phong shading is the standard. The overhead relative to Gouraud shading is negligible on modern GPUs (the fragment shader performs a few additional operations per pixel, but the GPU has thousands of cores to execute them in parallel), and the visual quality is significantly higher.

The Phong model naturally extends to multiple light sources by summing the contributions of each light:

\[ C = \sum_i \left( \alpha_a \, C_{\ell_i} \, C_o + \alpha_d \, (n \cdot u_{\ell_i})_+ \, C_{\ell_i} \, C_o + \alpha_s \, (u_{r_i} \cdot u_v)_+^{s_{\exp}} \, C_{\ell_i} \right) \] #### Distance attenuation

In physical reality, the light intensity of a point light source decreases proportionally to the inverse square of the distance (\(1/r^2\)). In real-time rendering, one often uses a simplified attenuation model that decreases linearly up to a maximum distance, beyond which the light has no effect:

\[ C_\ell(p) = \left(1 - \min\left(\frac{\|p - p_\ell\|}{d_{\text{att}}}, \, 1\right)\right) \, C_\ell^0 \] The vector \(p\) is the position of the point on the surface, \(p_\ell\) the position of the light, \(d_{\text{att}}\) the characteristic attenuation distance (beyond which the contribution is zero), and \(C_\ell^0\) the color of the light at the source. This linear model is less realistic than the \(1/r^2\) law, but it has the advantage of guaranteeing that the light dies out completely beyond \(d_{\text{att}}\), which allows rendering to be optimized by ignoring lights that are too far away.

An atmospheric fog effect simulates the absorption and scattering of light by the atmosphere. The principle is to progressively blend the calculated color with a uniform fog color as a function of the distance to the camera:

\[ C_{\text{final}} = (1 - \gamma(p)) \, C + \gamma(p) \, C_{\text{fog}} \] The fog blending factor \(\gamma(p) = \min( \|p - p_{\text{eye}}\| / d_{\text{fog}}, 1 )\) varies from 0 (near object, color unchanged) to 1 (far object, completely immersed in fog). The parameter \(d_{\text{fog}}\) controls the distance at which the fog becomes completely opaque, and \(C_{\text{fog}}\) is the color of the fog (often a light gray or the color of the sky). In practice, this effect provides a sense of atmospheric depth and naturally masks the far clipping plane (\(z_{\text{far}}\)), avoiding the abrupt disappearance of objects at the horizon.

The toon shading (or cel shading) is a non-photorealistic effect that gives a cartoon-like appearance. The principle is to discretize the color values into steps:

\[ C_{\text{toon}} = \frac{\text{floor}(C \times N)}{N} \] where \(N\) is the number of desired levels. This subsampling creates discrete color bands characteristic of the cartoon style, of which an example is shown below.

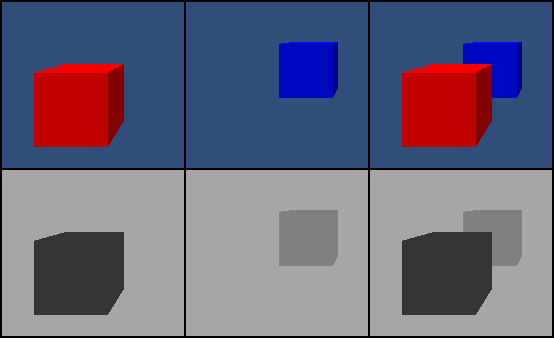

When several triangles overlap on the screen, it is necessary to determine which one is visible for each pixel. Without an occlusion mechanism, the order in which the triangles are rendered determines the result, which is incorrect, as demonstrated by the following image.

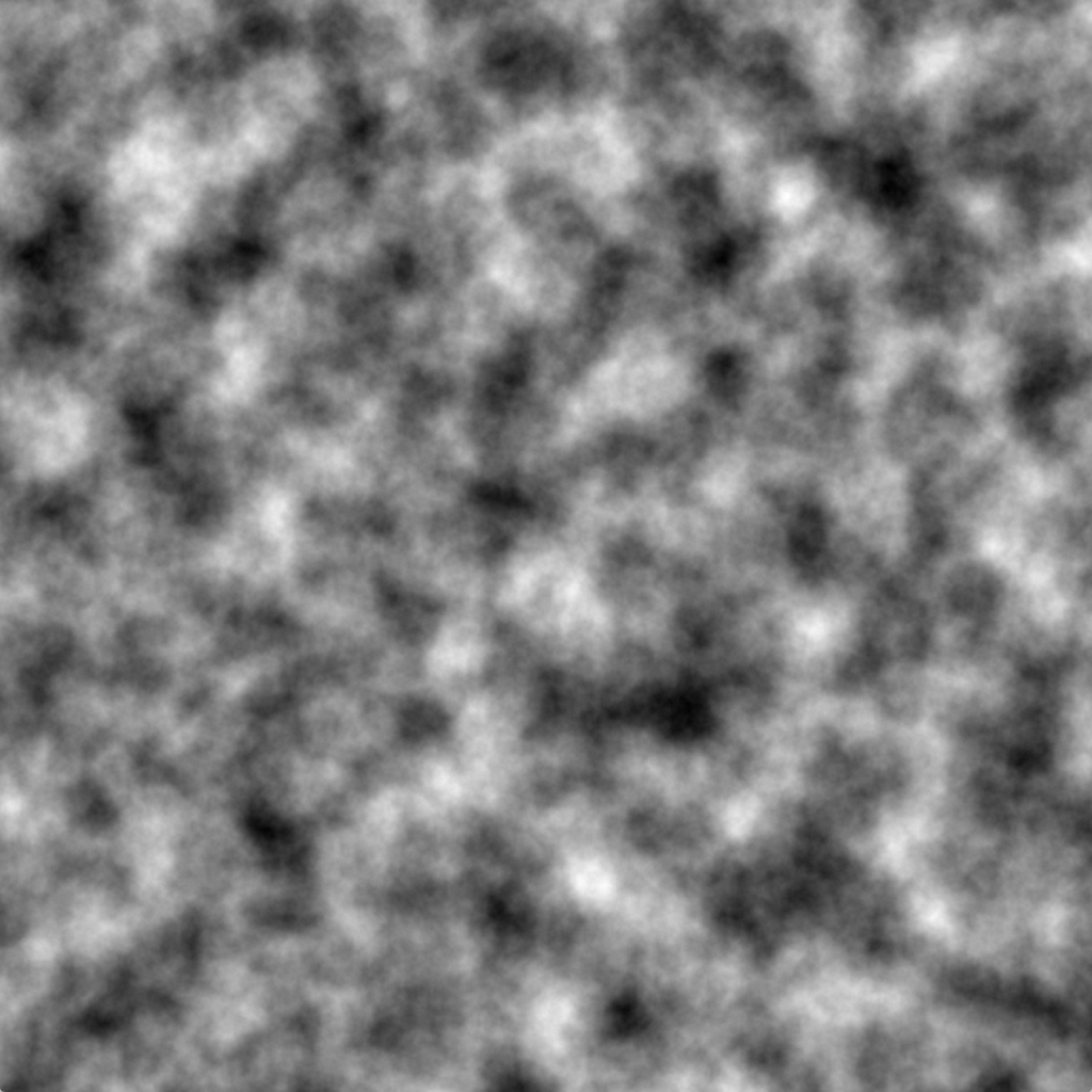

The Z-buffer (or depth buffer) is an auxiliary image of the same resolution as the final image, which stores the depth value \(z_{\text{ndc}}\) of the fragment closest to the camera for each pixel.

The algorithm is simple: for each fragment to be drawn, we compare its depth with the value stored in the Z-buffer:

def draw(position, couleur, z, image, zbuffer):

if z < zbuffer(position):

image(position) = couleur

zbuffer(position) = z

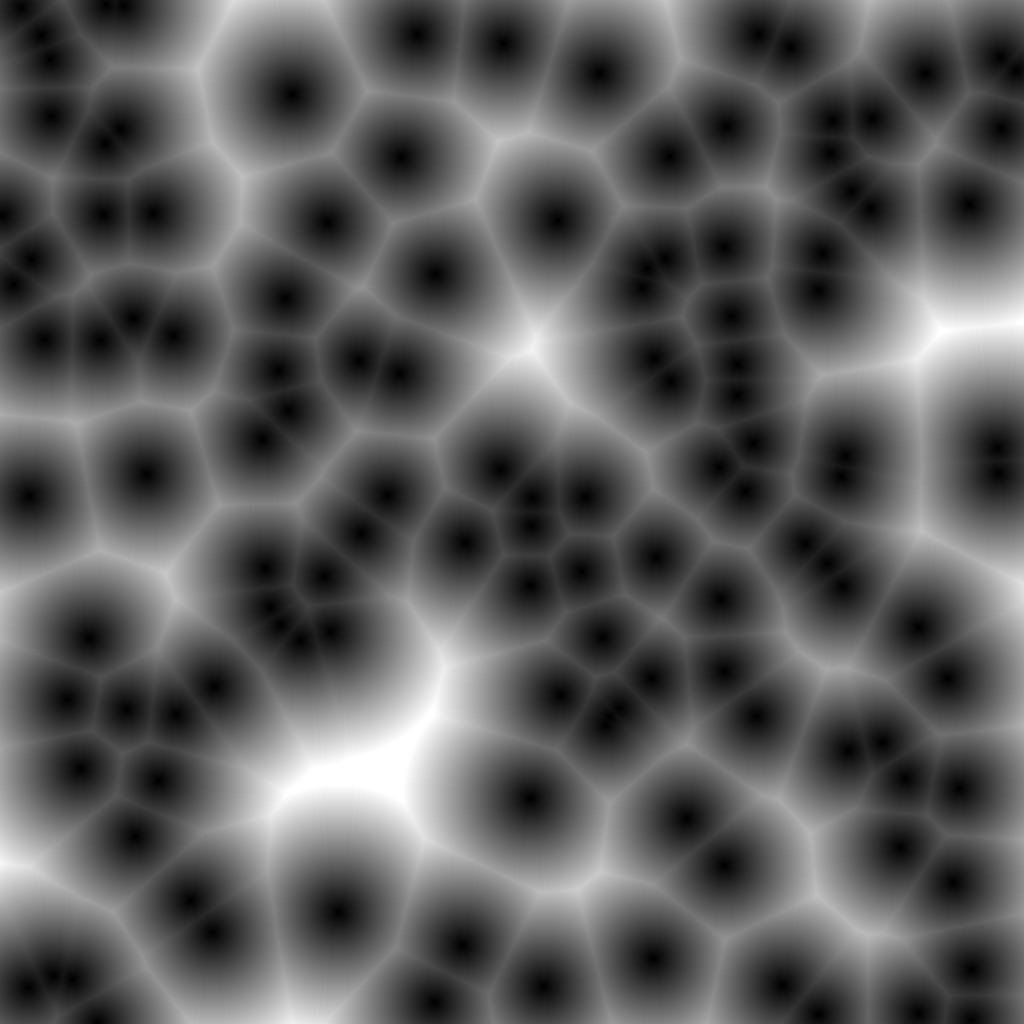

# sinon: ne rien dessiner (fragment masqué)The contents of the Z-buffer can be visualized as a grayscale image.

The Z-buffer is initialized to the maximum depth value (1.0, corresponding to the far plane) at the start of every frame. Each fragment updates the Z-buffer if its depth is smaller (closer to the camera) than the stored value. This ensures that only the closest fragment is drawn, independently of the drawing order of the triangles.

This step is performed automatically by the GPU (not programmable),

provided that depth testing is enabled

(glEnable(GL_DEPTH_TEST) in OpenGL).

The complete rendering pipeline chains together several steps in sequence. The vertex shader first transforms each vertex by applying the matrix chain Projection \(\times\) View \(\times\) Model, and passes the transformed attributes (world-space position, normal, color) to the next stages. The transformed vertices are then grouped into triangles during the assembly of primitives, using the connectivity table sent from the CPU.

The rasterization takes each triangle projected in 2D and determines the set of pixels it covers. For each covered pixel (called a fragment), the attributes of the triangle’s three vertices are interpolated barycentrically. The fragment shader receives these interpolated attributes and computes the final color of the fragment by applying the Phong illumination model. The depth test (Z-buffer) then compares the fragment’s depth with the value stored for that pixel: only the fragment closest to the camera is kept, the others are discarded. The result of this sequence of steps is the final image, stored in the framebuffer and displayed on the screen.

The full sequence of these steps is summarized in the diagram below.

Vertex shader : transforms the positions and normals, transmits the attributes to the fragment shader.

#version 330 core

layout (location = 0) in vec3 vertex_position;

layout (location = 1) in vec3 vertex_normal;

layout (location = 2) in vec3 vertex_color;

out struct fragment_data {

vec3 position;

vec3 normal;

vec3 color;

} fragment;

uniform mat4 model;

uniform mat4 view;

uniform mat4 projection;

void main() {

vec4 position = model * vec4(vertex_position, 1.0);

mat4 modelNormal = transpose(inverse(model));

vec4 normal = modelNormal * vec4(vertex_normal, 0.0);

fragment.position = position.xyz;

fragment.normal = normal.xyz;

fragment.color = vertex_color;

gl_Position = projection * view * position;

}Note: the normal is transformed by the transpose of the inverse of

the Model matrix (transpose(inverse(model))), and not by

the Model matrix directly. Indeed, if the Model matrix contains a

non-uniform scaling, the direct multiplication would distort the normals

and the shading would be incorrect.

Fragment shader : calculates Phong illumination for each fragment.

#version 330 core

in struct fragment_data {

vec3 position;

vec3 normal;

vec3 color;

} fragment;

uniform vec3 light_position;

uniform vec3 light_color;

layout(location = 0) out vec4 FragColor;

void main() {

vec3 N = normalize(fragment.normal);

vec3 L = normalize(light_position - fragment.position);

float diffuse_magnitude = max(dot(N, L), 0.0);

vec3 c = 0.1 * fragment.color * light_color; // ambiante

c = c + 0.7 * diffuse_magnitude * fragment.color * light_color; // diffuse

// + composante spéculaire (omise pour simplifier)

FragColor = vec4(c, 1.0);

}The variables fragment.position,

fragment.normal and fragment.color are

automatically interpolated barycentrically by the GPU

between the values of the three vertices of the current triangle.

A mesh (mesh) is a set of polygons sharing vertices. It is described by :

Depending on the type of polygons used, we distinguish :

These three categories are illustrated below.

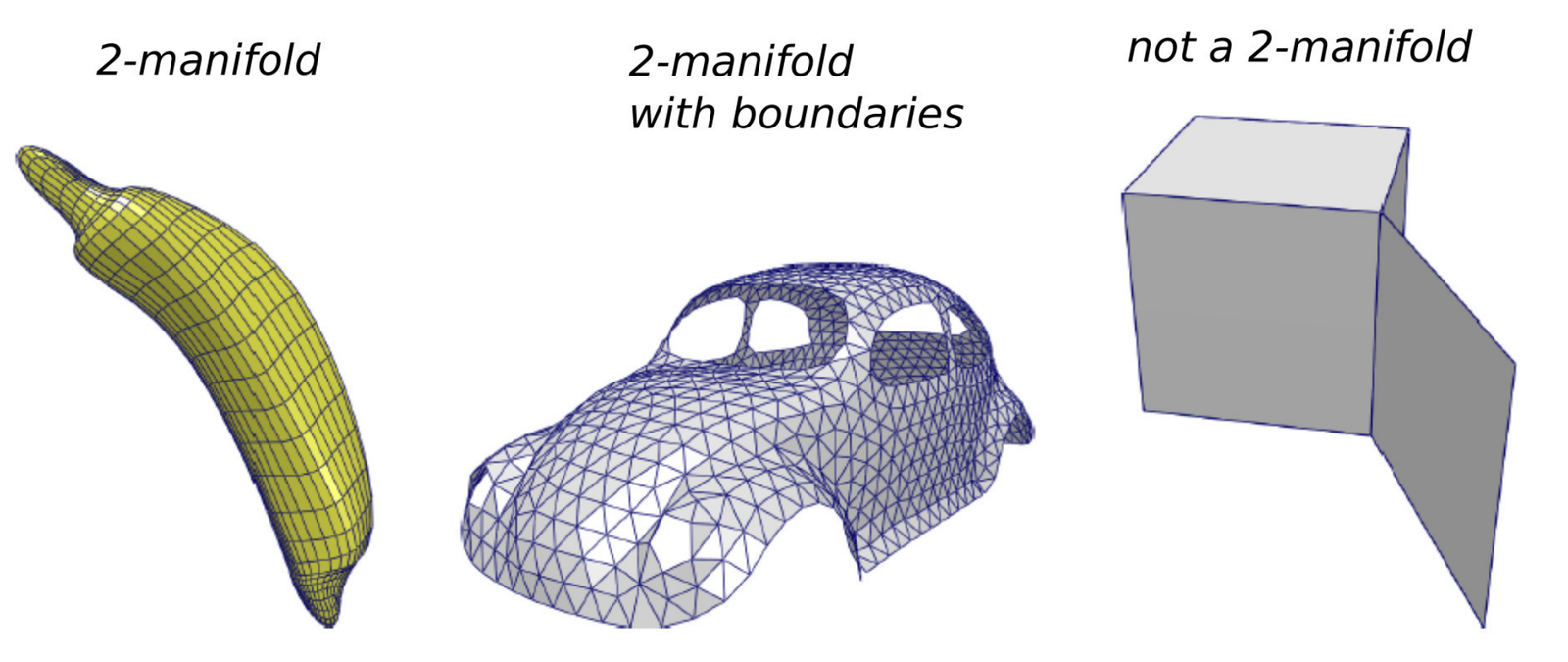

A mesh represents a manifold (manifold) if each edge is shared by at most 2 faces, as illustrated in the following figure. This property guarantees that the surface is locally equivalent to a plane (or a half-plane at the boundary), which is necessary for most mesh processing algorithms.

The standard encoding of a triangular mesh in C++ uses two arrays:

struct vec3 { float x, y, z; };

struct int3 { int i, j, k; };

std::vector<vec3> position; // positions des sommets

std::vector<int3> connectivity; // indices des faces (triplets)The use of std::vector guarantees the memory

contiguity, essential for efficient transfer to the GPU.

Example: tetrahedron

std::vector<vec3> position = { {0,0,0}, {1,0,0}, {0,1,0}, {0,0,1} };

std::vector<int3> connectivity = { {0,1,2}, {0,1,3}, {0,2,3}, {1,2,3} };Example: planar mesh

std::vector<vec3> position = { p0, p1, p2, p3, p4, p5, p6, p7, p8 };

std::vector<int3> connectivity = { {0,1,3}, {1,2,3}, {0,3,4}, {3,5,4},

{2,5,3}, {2,6,5}, {5,6,7}, {5,7,8}, {4,5,8} };The following diagram shows the correspondence between the indices and the geometry of the mesh.

Note on orientation : the triplets \((0, 1, 3)\), \((1, 3, 0)\) and \((3, 0, 1)\) are equivalent (cyclic permutation). By contrast, \((0, 1, 3)\) has an orientation inverse to \((3, 1, 0)\), \((1, 0, 3)\) or \((0, 3, 1)\). The orientation determines the direction of the normal and thus the sense of the face (see previous chapter).

The typical workflow for displaying a mesh with OpenGL follows these steps:

mesh_drawable variable

(shared between methods).mesh structure containing

the data arrays in RAM (CPU).mesh to the

mesh_drawable in VRAM (GPU).draw() which activates the shader, sends the

uniforms and launches the rendering.This flow is summarized in the diagram below.

In practice, each vertex carries additional attributes beyond its position: normals, texture coordinates, colors, etc.

std::vector<vec3> position = { p_0, p_1, p_2, p_3 };

std::vector<vec3> normal = { n_0, n_1, n_2, n_3 };

std::vector<vec3> color = { c_0, c_1, c_2, c_3 };

std::vector<vec2> uv = { uv_0, uv_1, uv_2, uv_3 };

std::vector<int3> connectivity = { {0,1,2}, {0,1,3}, {0,2,3}, {1,2,3} };with \(p_i = (x_i, y_i, z_i)\), \(n_i = (n_{x_i}, n_{y_i}, n_{z_i})\) with \(\|n_i\| = 1\), and \(c_i = (r_i, g_i, b_i)\). The following figure represents these different attributes on a mesh.

Key points:

Sometimes, it is necessary to duplicate a vertex when it occupies the same position but with different attributes depending on the face considered. The most common case is that of sharp edges.

Consider a vertex located at a position \(p_A\) where two groups of faces meet at a right angle. If one desires a rendering with a crisp edge (not smoothed), the vertex must carry two different normals, one for each group of faces. Since the connectivity table is unique, one must create two distinct entries in the vertex array, sharing the same position but with different normals.

// Avant duplication : sommet v4 à la position pA avec une seule normale

std::vector<vec3> position = { p0, p1, p2, p3, pA, pB };

std::vector<vec3> normal = { n0, n0, n1, n1, n2, n2 };

// Après duplication : v4 et v6 partagent pA mais avec des normales différentes

std::vector<vec3> position = { p0, p1, p2, p3, pA, pB, pA, pB };

std::vector<vec3> normal = { n0, n0, n1, n1, n1, n1, n0, n0 };The principle is visible in the following diagram.

For a cube, each geometric vertex is shared by 3 perpendicular faces. Since each face requires a different normal, each vertex is duplicated 3 times, i.e., \(8 \times 3 = 24\) vertices instead of 8.

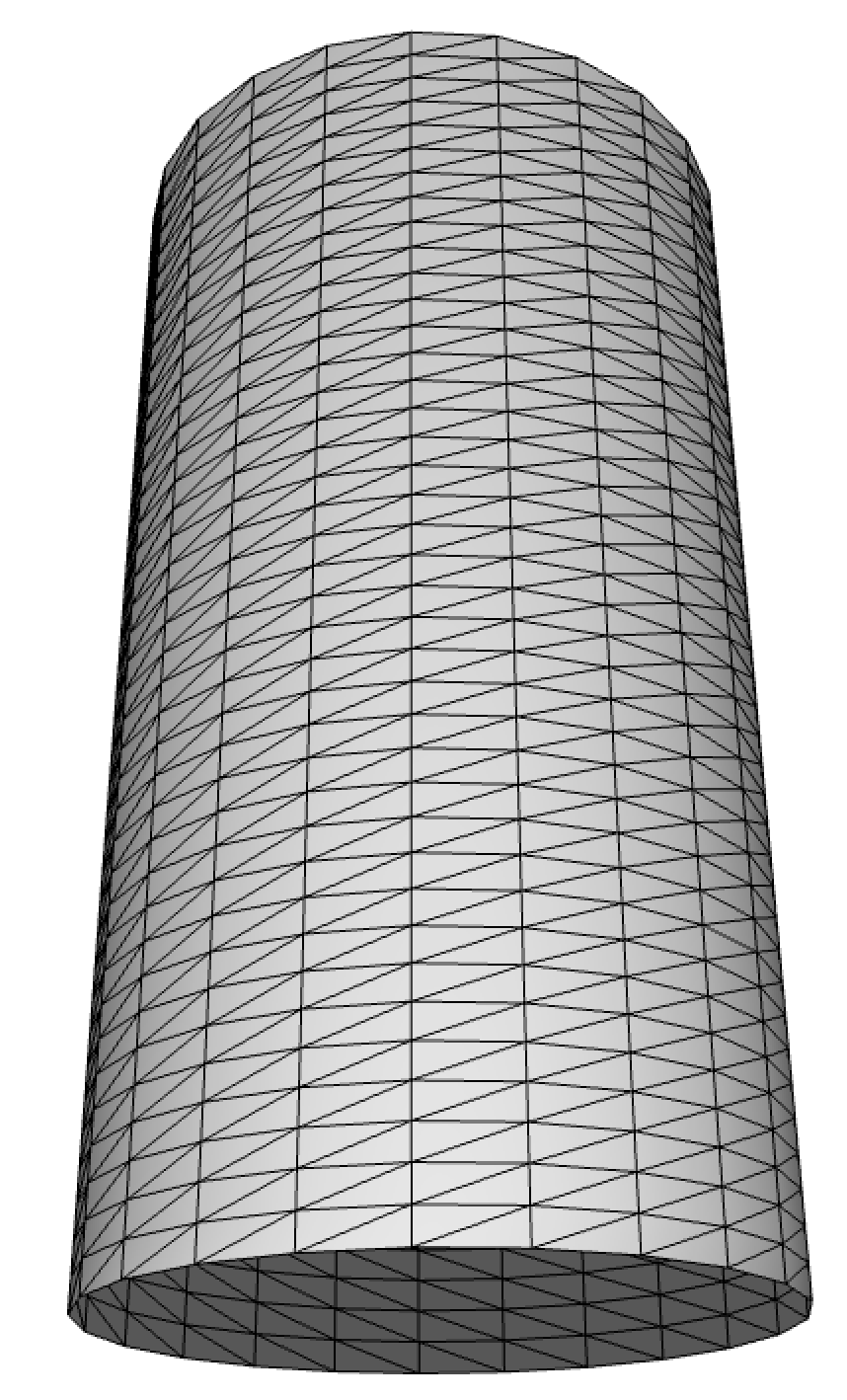

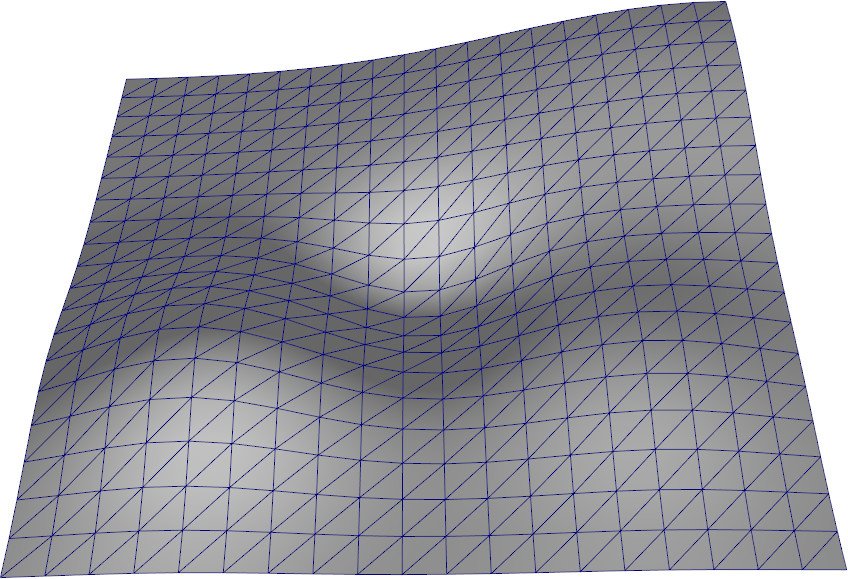

A common case of meshing is the discretization of parametric surfaces.

Let a surface \(S(u, v) = (S_x(u,v), \, S_y(u,v), \, S_z(u,v))\) with \((u, v) \in [0, 1]^2\), uniformly sampled on a grid \(N_u \times N_v\), the structure of which is shown below.

The construction of the mesh is done in two steps :

Vertex positions :

for(int ku = 0; ku < Nu; ku++) {

for(int kv = 0; kv < Nv; kv++) {

float u = ku / (Nu - 1.0f);

float v = kv / (Nv - 1.0f);

S.position[kv + Nv * ku] = { Sx(u,v), Sy(u,v), Sz(u,v) };

}

}Connectivity (two triangles per grid cell) :

for(int ku = 0; ku < Nu - 1; ku++) {

for(int kv = 0; kv < Nv - 1; kv++) {

unsigned int idx = kv + Nv * ku;

uint3 triangle_1 = { idx, idx + 1 + Nv, idx + 1 };

uint3 triangle_2 = { idx, idx + Nv, idx + 1 + Nv };

S.connectivity.push_back(triangle_1);

S.connectivity.push_back(triangle_2);

}

}This scheme applies to many surfaces, as shown by the following examples.

Exemples de surfaces paramétriques : cylindre (gauche) et terrain (droite).

Normals are not always provided (files without normals, procedural meshes, real-time deformations). It is then necessary to calculate from geometry.

The standard method consists in computing the unweighted average of the normals of the neighboring triangles of each vertex :

\[ n_i = \frac{\sum_{t \in \mathcal{N}_i} n_t}{\left\| \sum_{t \in \mathcal{N}_i} n_t \right\|} \] This process is illustrated below.

Implementation :

std::vector<vec3> normal(position.size(), {0,0,0});

// Accumulation par triangle

for(int t = 0; t < connectivity.size(); t++) {

int a = connectivity[t][0];

int b = connectivity[t][1];

int c = connectivity[t][2];

vec3 n = cross(position[b] - position[a], position[c] - position[a]);

n = normalize(n);

normal[a] += n;

normal[b] += n;

normal[c] += n;

}

// Normalisation finale

for(int k = 0; k < normal.size(); k++) {

normal[k] = normalize(normal[k]);

}If vertices are duplicated (sharp edges), the computed normals will naturally differ for each copy, since they do not share the same neighboring triangles, as can be seen in the following figure.

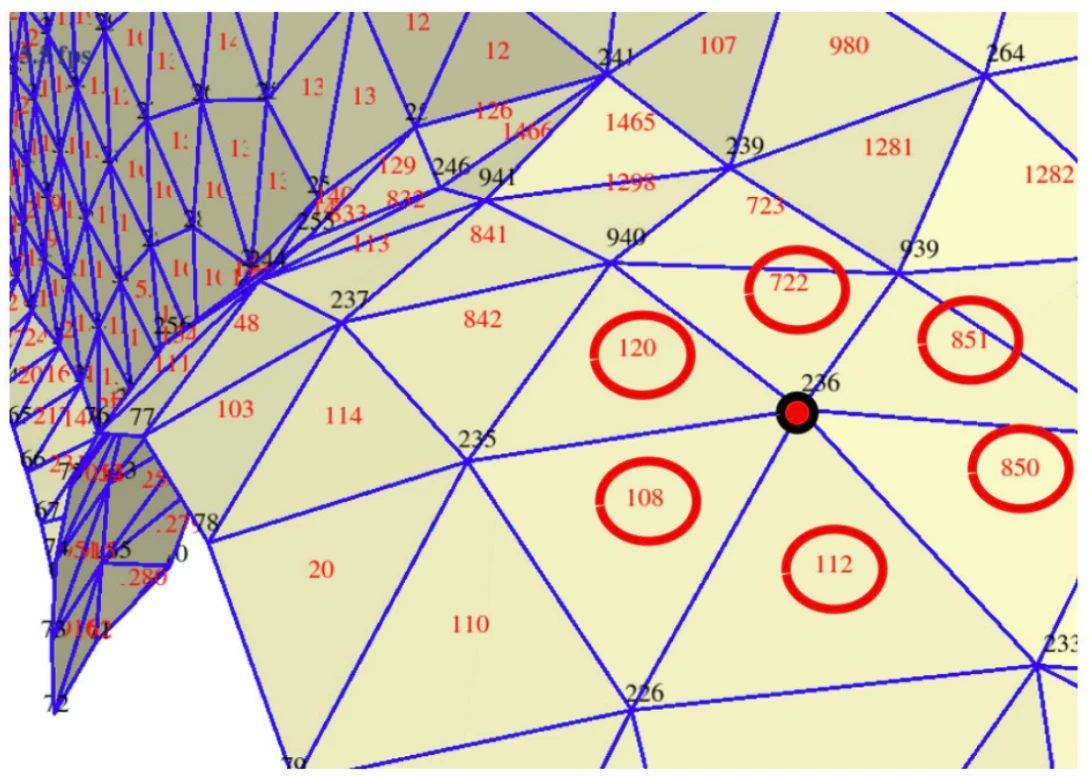

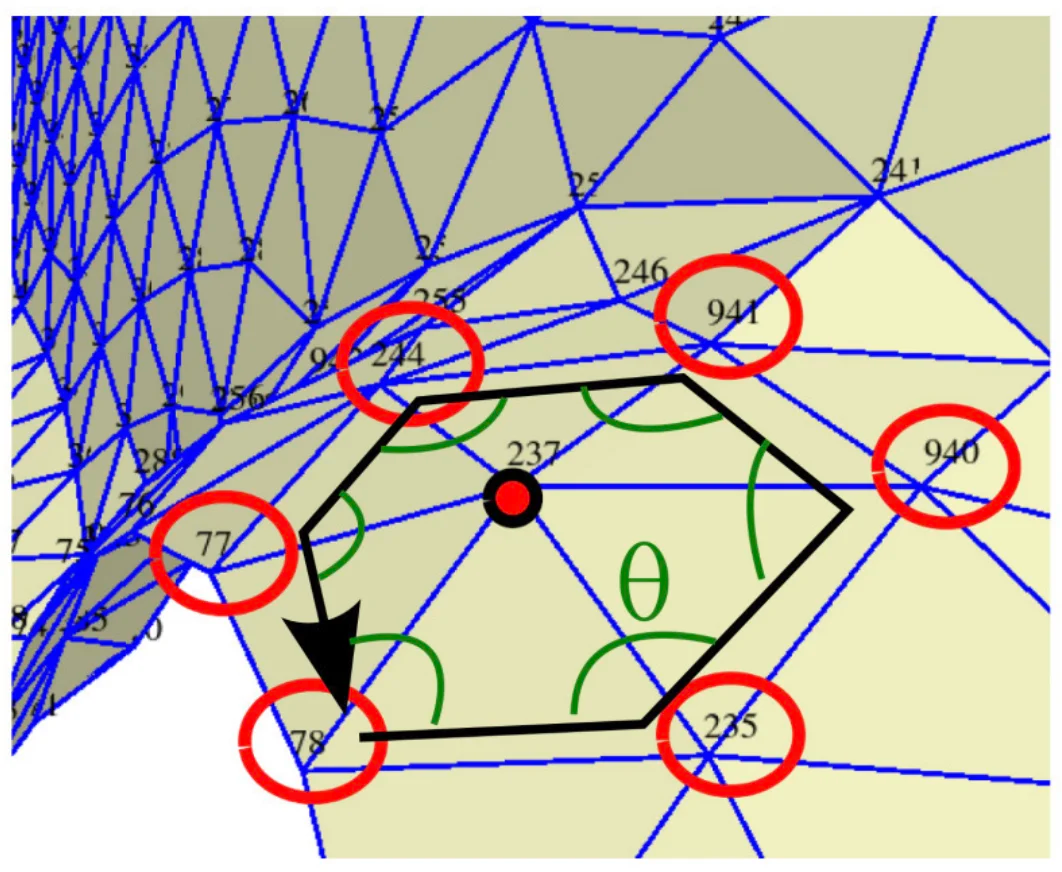

The 1-ring of a vertex is the set of triangles that share this vertex. This neighborhood structure is useful for many algorithms (normal calculation, smoothing, subdivision, etc.).

std::vector<std::vector<int>> one_ring;

one_ring.resize(position.size());

for(int k_tri = 0; k_tri < connectivity.size(); k_tri++) {

for(int k = 0; k < 3; k++) {

one_ring[connectivity[k_tri][k]].push_back(k_tri);

}

}For example:

one_ring[235] = {842, 120, 108, 110, 20, 114};

one_ring[236] = {108, 112, 851, 850, 120, 722};The figure below illustrates this notion of neighborhood.

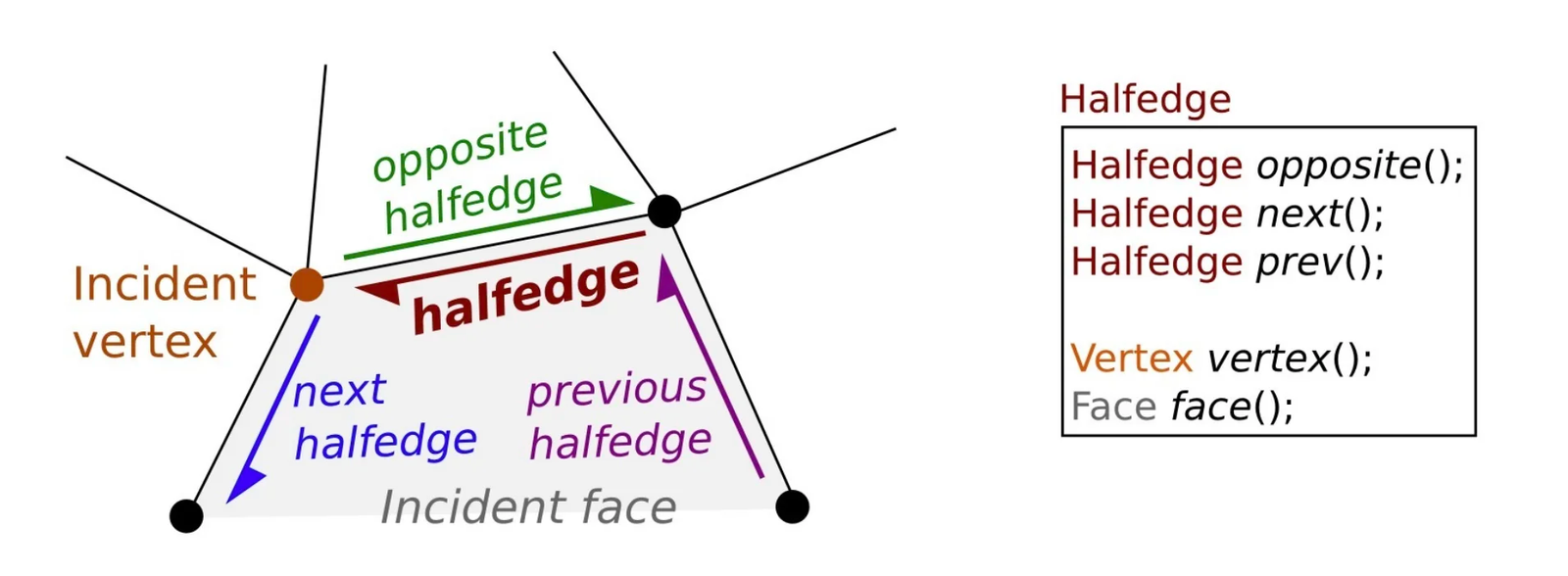

The half-edge (half-edge) structure is a data structure richer than the simple connectivity table. It encodes the edges in an oriented manner: each edge is represented by two half-edges with opposite directions, as shown in the diagram below. Faces appear as loops along the half-edges.

Advantages :

Limitations :

Example with CGAL :

typedef CGAL::Cartesian<double> Kernel;

typedef CGAL::Polyhedron_3<Kernel> Polyhedron;

int main() {

Polyhedron mesh;

std::ifstream stream("mesh.off");

stream >> mesh;

int face_number = 0;

for(auto it_face = mesh.facets_begin();

it_face != mesh.facets_end(); ++it_face)

{

auto halfedge = it_face->halfedge();

auto const halfedge_end = halfedge;

do {

const auto p = halfedge->vertex()->point();

std::cout << p << std::endl;

halfedge = halfedge->next();

} while(halfedge != halfedge_end);

face_number++;

}

}The objective of textures is to provide a color detail level finer than the geometry. A triangle can have a uniform color or be interpolated between its vertices, but this remains limited. Textures allow mapping a detailed 2D image onto the 3D surface.

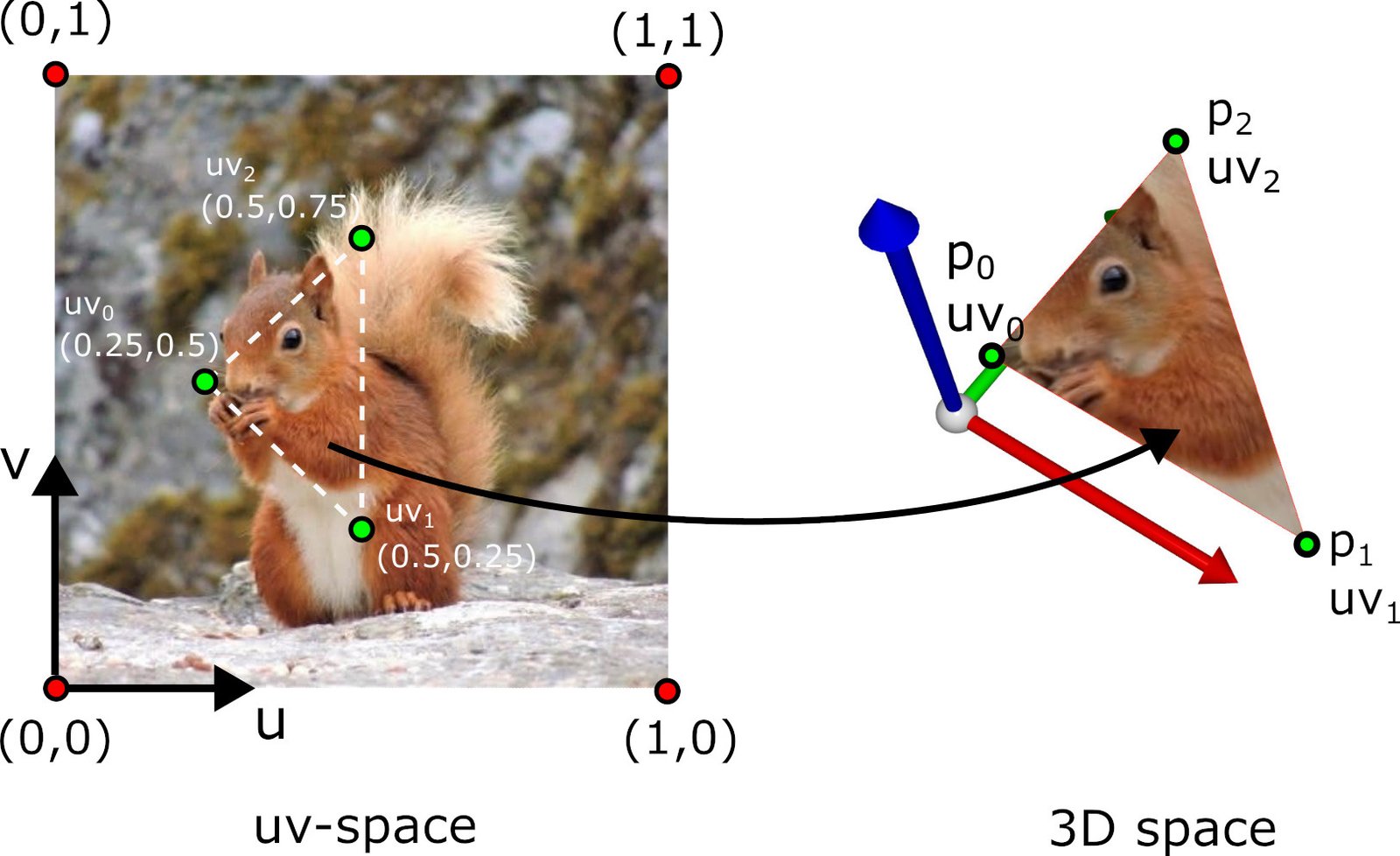

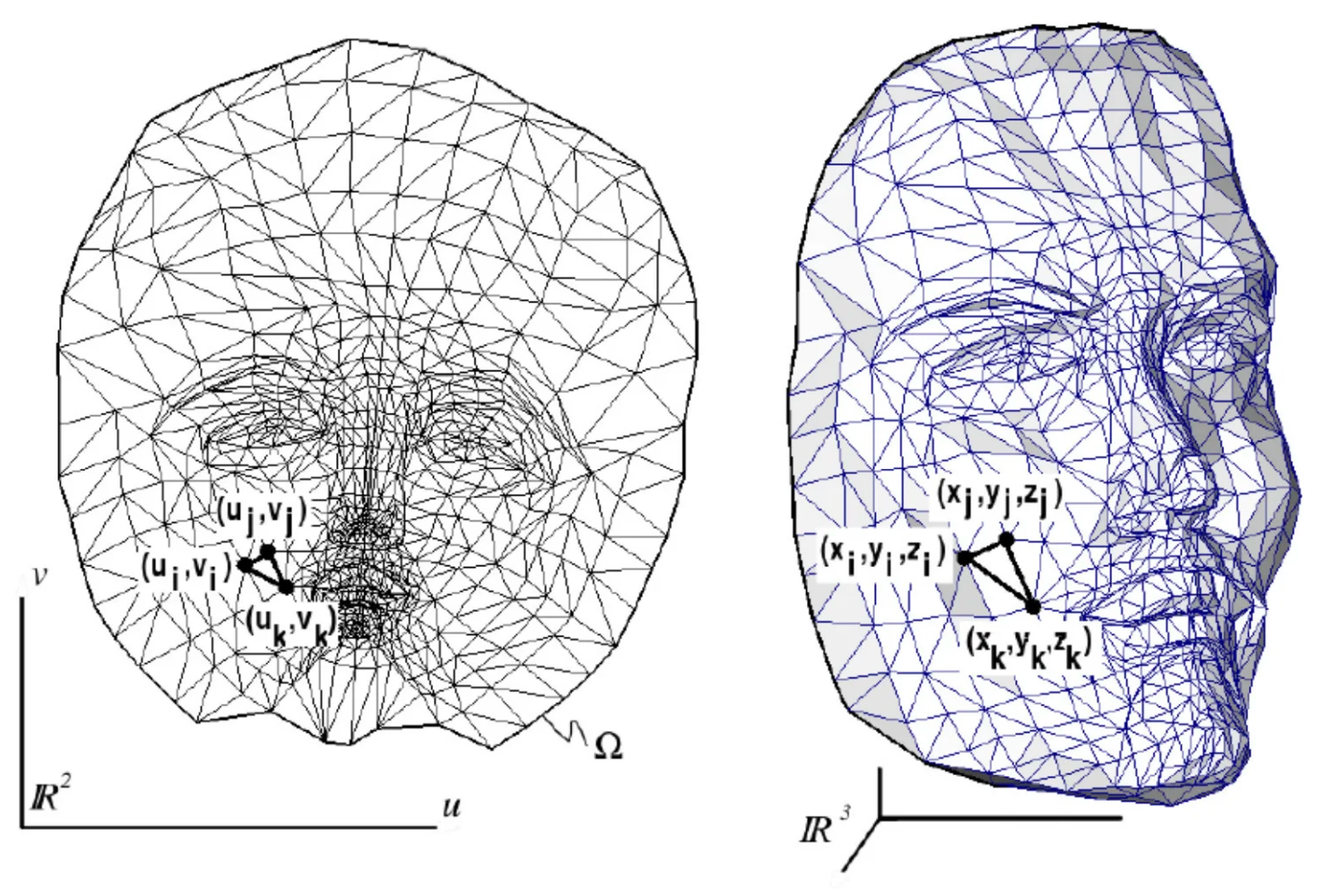

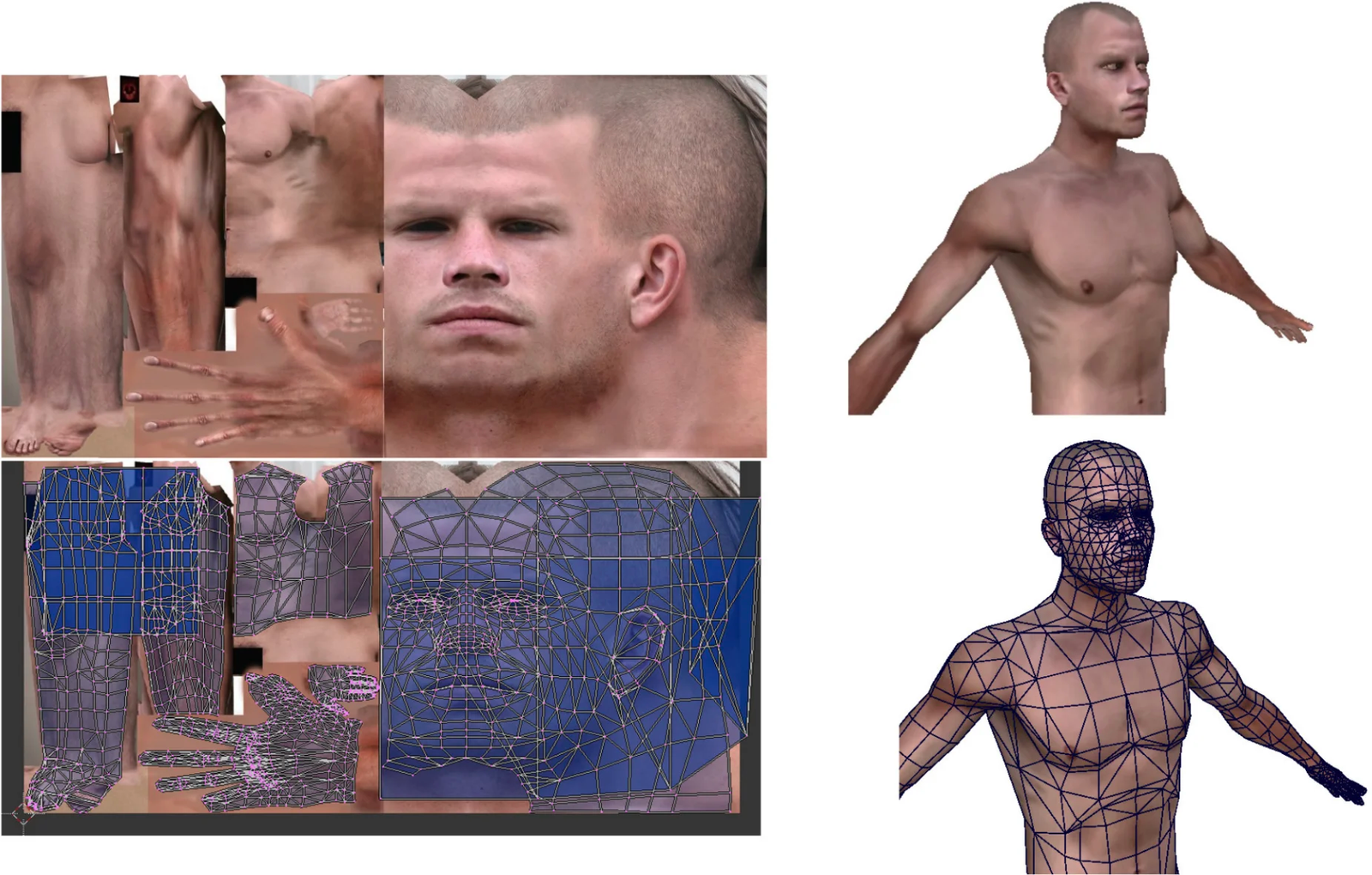

The classic case is the UV-mapping: a 2D image (the texture) is associated with the surface 3D via texture coordinates \((u, v)\) (also denoted \((s, t)\)).

The mechanism is illustrated below.

Principe de l'UV-mapping : association entre coordonnées 2D de la texture et surface 3D.

The convention is that \((0, 0)\) corresponds to the bottom-left corner of the image and \((1, 1)\) to the top-right corner.

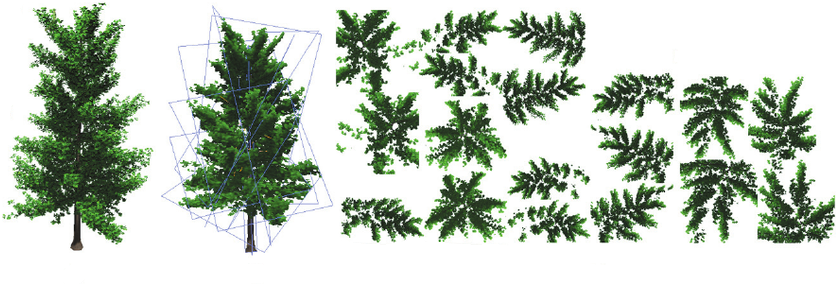

For complex models, UV unfolding is performed in pieces (patches or islands). The process comprises the following steps:

Patch cutting: the surface is cut along seams (seams) chosen by the artist or an automatic algorithm. Seams are placed in less visible areas (creases, back of the object).

Unfolding: each patch is unfolded in the 2D plane while minimizing deformations. Classical algorithms include ABF (Angle-Based Flattening), LSCM (Least Squares Conformal Maps) and Slim (Scalable Locally Injective Mappings).

Packing: the unfolded patches are arranged in the space \([0, 1]^2\) by maximizing space occupancy (to avoid wasting the texture resolution).

Painting: the artist paints the texture directly in the unfolded 2D space, or uses 3D painting tools (such as Substance Painter) that project directly onto the surface.

The seams between patches correspond to the places where the texture may exhibit discontinuities (the vertex at the seam is duplicated with different UV coordinates on each side). An example of unfolding is visible below.

UV mapping consists of “unfolding” the 3D surface onto a 2D plane. This unfolding necessarily introduces deformations in length and in angle for any surface whose Gaussian curvature is nonzero.

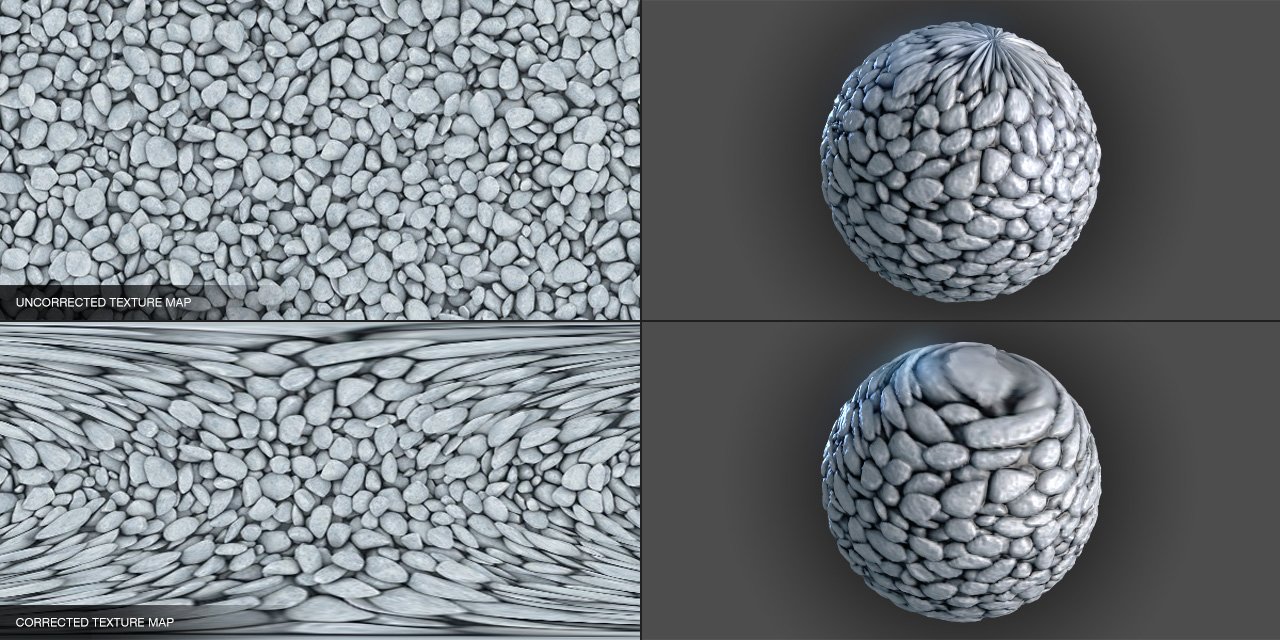

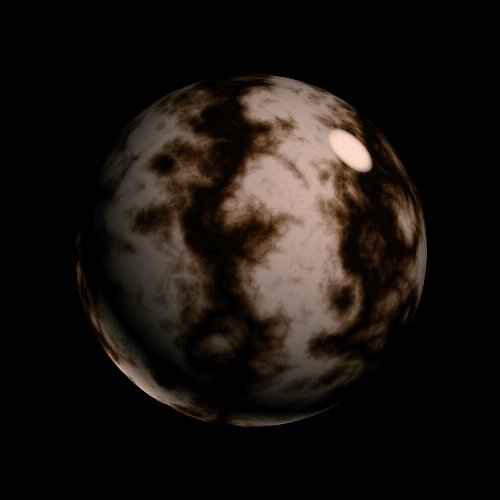

In particular, it is impossible to map a planar image onto a sphere without distortion. This is a fundamental result of differential geometry: Gaussian curvature is an intrinsic invariant of the surface (Gauss’s theorema egregium). A sphere has constant positive Gaussian curvature (\(K = 1/R^2\)), while a plane has zero curvature (\(K = 0\)). No distortion without stretching can transform one into the other.

Only the developable surfaces (Gaussian curvature zero, such as cylinders and cones) can be unfolded without distortion. In practice, one seeks to minimize deformations during UV unfolding, accepting a trade-off between the distortion of angles (conformal) and the distortion of areas (authalic). The following figure shows a typical case of deformation on a sphere.

Déformation lors du placage d'une texture sur une sphère : étirement aux pôles.

Beyond color (diffuse map), textures are used to store many types of information about the surface:

Texture coordinates are stored as a vertex attribute, in the same way as positions and normals:

std::vector<vec2> uv = { uv_0, uv_1, uv_2, ... };On the C++/OpenGL side, the texture is loaded and sent to the GPU:

// Chargement de l'image

int width, height, channels;

unsigned char* data = stbi_load("texture.png", &width, &height, &channels, 4);

// Création de la texture OpenGL

GLuint texture_id;

glGenTextures(1, &texture_id);

glBindTexture(GL_TEXTURE_2D, texture_id);

glTexImage2D(GL_TEXTURE_2D, 0, GL_RGBA, width, height, 0,

GL_RGBA, GL_UNSIGNED_BYTE, data);

// Activation dans le fragment shader (unité de texture 0)

glActiveTexture(GL_TEXTURE0);

glBindTexture(GL_TEXTURE_2D, texture_id);

glUniform1i(glGetUniformLocation(shader, "texture_image"), 0);During rendering, the fragment shader receives the texture

coordinates \((u, v)\) that are

barycentrically interpolated between the three vertices of the current

triangle. It then reads the color from the texture image at that

position using the texture() function:

uniform sampler2D texture_image; // la texture, envoyée depuis le CPU

in vec2 fragment_uv; // coordonnées interpolées

out vec4 FragColor;

void main() {

vec4 color = texture(texture_image, fragment_uv);

FragColor = color;

}The variable sampler2D represents a reference to a

texture stored in VRAM. The function texture(sampler, uv)

performs the sampling: it converts the normalized

coordinates \((u, v) \in [0, 1]^2\) to

pixel coordinates in the image, and then returns the corresponding

color.

The interpolated coordinates \((u, v)\) rarely land exactly at the center of a texture pixel (called a texel). The GPU must therefore interpolate between neighboring texels. This process is called texture filtering. It occurs in two cases:

The most common filtering modes are :

GL_NEAREST) : selects the

nearest texel. Pixelated rendering but fast. Useful for a pixel-art

style.GL_LINEAR) : bilinearly

interpolates between the 4 closest texels. Smooth rendering, standard

for magnification.GL_LINEAR_MIPMAP_LINEAR)

: bilinear interpolation + interpolation between two mipmap levels (see

below). Standard for minification.OpenGL configuration:

glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_MAG_FILTER, GL_LINEAR);

glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_MIN_FILTER, GL_LINEAR_MIPMAP_LINEAR);Mipmaps are precomputed versions of the texture at decreasing resolutions (each level halves the resolution). During minification, the GPU selects the mipmap level whose resolution is most suitable for the size of the triangle on screen, and interpolates if necessary.

For a texture of \(1024 \times 1024\), the mipmap chain contains the levels: \(1024^2\), \(512^2\), \(256^2\), …, \(2^2\), \(1^2\) (i.e., 11 levels). The total memory footprint is only \(\frac{4}{3}\) of the original texture.

Mipmaps reduce the aliasing (flickering of textures viewed from afar) and improve performance (the distant textures are read at lower-resolution levels, better utilized by the GPU’s memory cache).

Automatic generation in OpenGL :

glGenerateMipmap(GL_TEXTURE_2D);When texture coordinates exit the interval \([0, 1]\), the behavior depends on the wrapping mode:

GL_REPEAT) : the texture

repeats indefinitely. The coordinate \(u =

2.3\) is interpreted as \(u =

0.3\). Useful for tiling textures (bricks, grass, etc.).GL_MIRRORED_REPEAT) :

the texture repeats while alternating its orientation, avoiding visible

seams at the edges.GL_CLAMP_TO_EDGE) :

coordinates outside \([0, 1]\) are

clamped to the edge of the texture. Out-of-range pixels take the color

of the closest edge.OpenGL configuration:

glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_WRAP_S, GL_REPEAT);

glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_WRAP_T, GL_REPEAT);All textures (color, normals, specular, etc.) share the same UV

coordinates and use the same sampling mechanism. They are simply read

from different sampler2Ds in the fragment shader:

uniform sampler2D diffuse_map;

uniform sampler2D normal_map;

uniform sampler2D specular_map;

void main() {

vec3 color = texture(diffuse_map, fragment.uv).rgb;

vec3 normal = texture(normal_map, fragment.uv).rgb * 2.0 - 1.0;

float spec = texture(specular_map, fragment.uv).r;

// ...

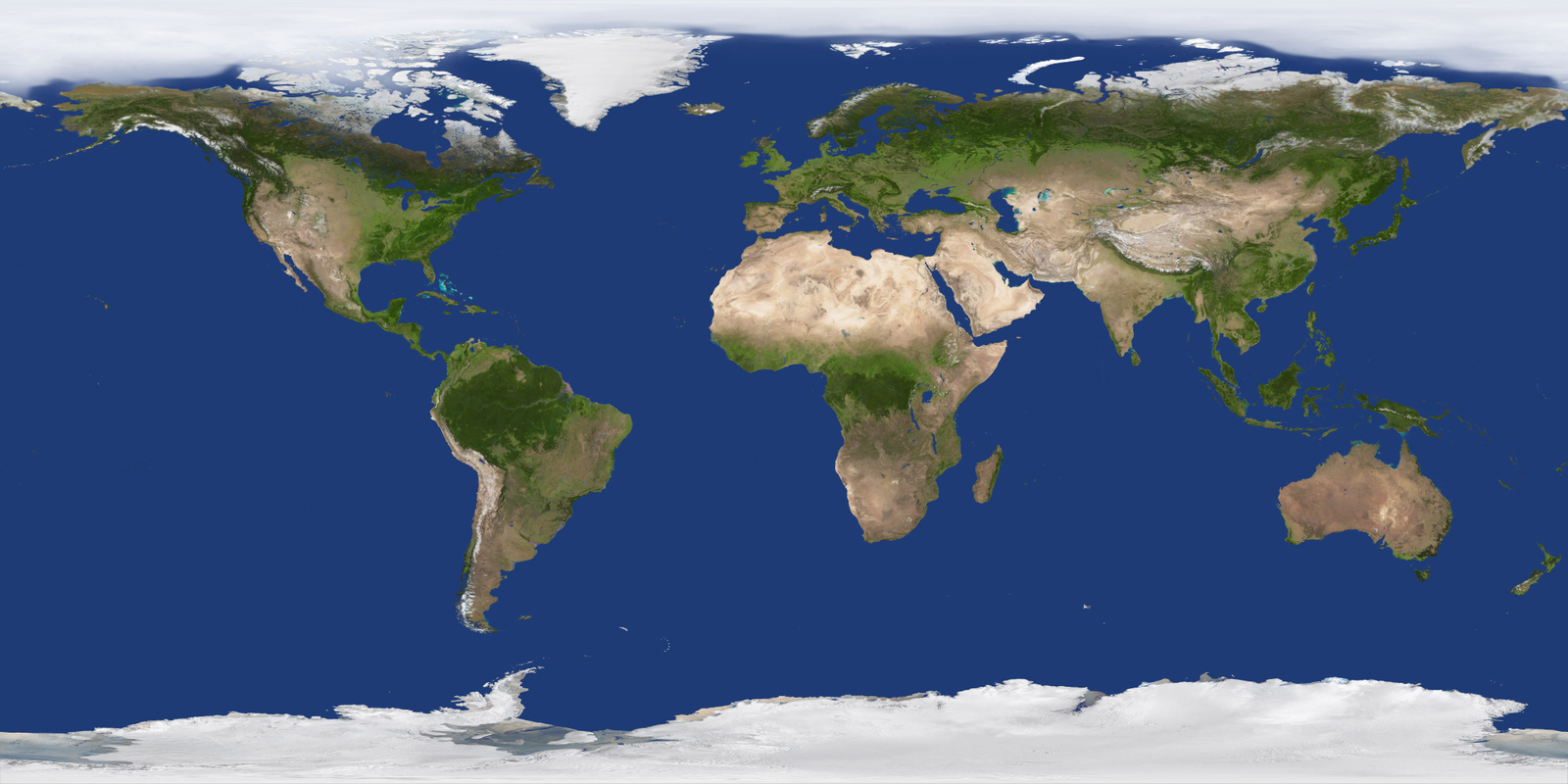

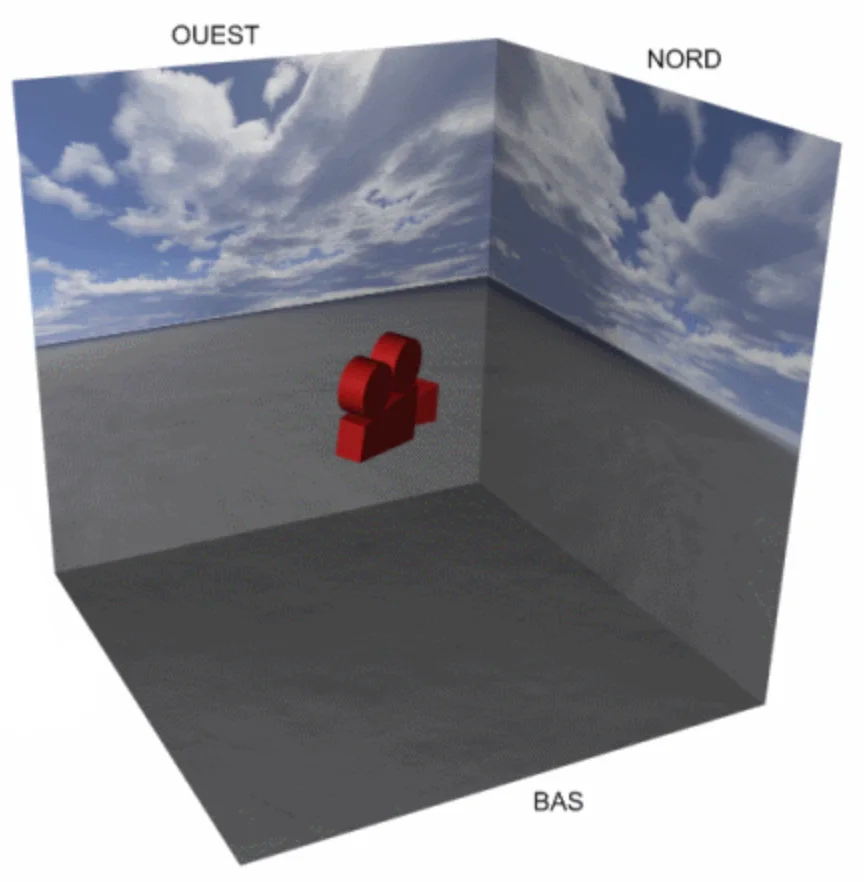

}A skybox is a textured box representing the distant environment (sky, landscape). It is always centered on the camera’s position, which gives the impression of an infinite landscape, as shown below.

The implementation principle is as follows:

void display() {

glDepthMask(GL_FALSE); // désactiver l'écriture dans le depth buffer

draw(skybox, environment);

glDepthMask(GL_TRUE); // réactiver l'écriture

// dessiner les autres objets ...

}The environment mapping simulates the reflection of the environment (skybox) on a shiny surface. This gives the impression that the object reflects the world around it.

The principle, illustrated below, is to compute, for each fragment, the reflection direction of the view vector with respect to the normal, and then read the corresponding color from the skybox texture.

vec3 V = normalize(camera_position - fragment.position);

vec3 R_skybox = reflect(-V, N);

vec4 color_env = texture(image_skybox, R_skybox);The normal mapping consists of modifying the surface normals using an image (the normal map) to simulate geometric details finer than the triangles of the mesh. The real geometry of the triangle does not change: only the normal used in the illumination calculation is modified, which yields the illusion of relief visible in the following comparison.

The normals of a normal map are not stored in world coordinates (which would depend on the object’s orientation), but in a frame local to the triangle called the tangent space \((T, B, N)\) :

This frame is defined by the texture coordinates \((u, v)\) and the positions of the triangle’s vertices:

\[ \begin{cases} p_0 - p_1 = (u_0 - u_1) \, T + (v_0 - v_1) \, B \\ p_2 - p_1 = (u_2 - u_1) \, T + (v_2 - v_1) \, B \\ N = T \times B \end{cases} \] This system of two vector equations (i.e., 6 scalar equations) allows solving \(T\) and \(B\) (6 unknowns). In matrix notation, for the x components, for example:

\[ \begin{pmatrix} T_x \\ B_x \end{pmatrix} = \frac{1}{(u_0 - u_1)(v_2 - v_1) - (u_2 - u_1)(v_0 - v_1)} \begin{pmatrix} v_2 - v_1 & -(v_0 - v_1) \\ -(u_2 - u_1) & u_0 - u_1 \end{pmatrix} \begin{pmatrix} (p_0 - p_1)_x \\ (p_2 - p_1)_x \end{pmatrix} \] And likewise for the components \(y\) and \(z\). The vectors \(T\) and \(B\) are then normalized.

Each pixel of the normal map stores a perturbed normal with components \((r, g, b) \in [0, 1]^3\), encoding the components in tangent space to [-1, 1]:

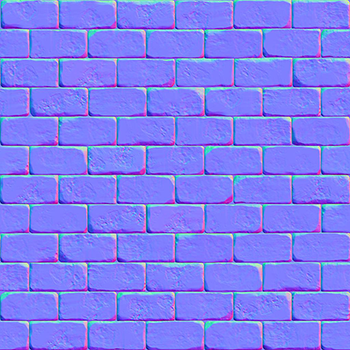

\[ n_{\text{tangent}} = 2 \begin{pmatrix} r \\ g \\ b \end{pmatrix} - \begin{pmatrix} 1 \\ 1 \\ 1 \end{pmatrix} \] The blue component \(b\) corresponds to the direction of the geometric normal \(N\). That’s why normal maps appear bluish: most normals are close to \((0, 0, 1)\) in tangent space, which yields \((0.5, 0.5, 1.0)\) in RGB. This encoding can be observed in the images below.

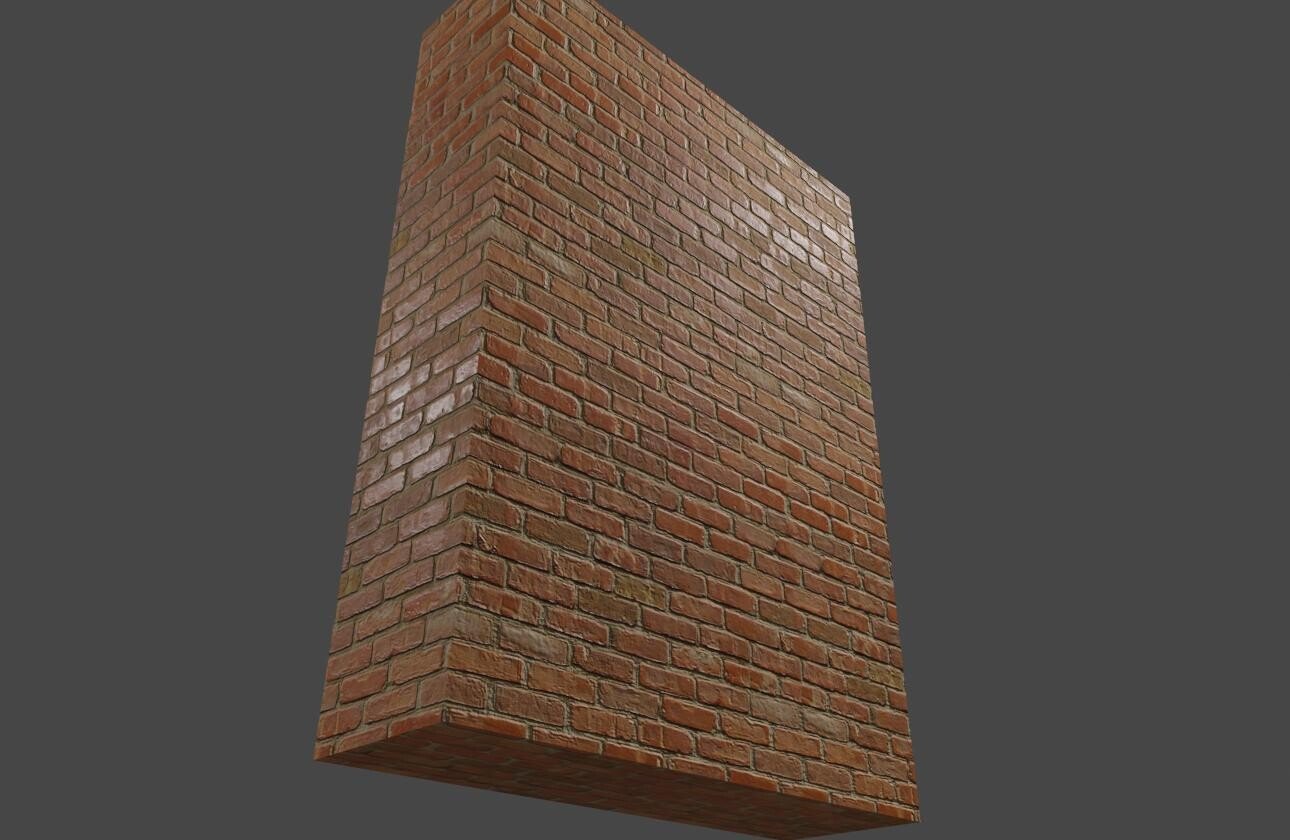

Normal map : les normales sont encodées en couleur dans l'espace tangent (gauche). Application sur un mur en briques (droite).

The final normal vector in world coordinates is obtained by a change of basis:

\[ n_{\text{world}} = \text{TBN} \times n_{\text{tangent}} = T \cdot n_x + B \cdot n_y + N \cdot n_z \] where \(\text{TBN} = (T \mid B \mid N)\) is the 3×3 matrix whose columns are the vectors of the tangent frame.

Vertex shader: calculation of the tangent frame and passing to the fragment shader.

layout(location = 0) in vec3 vertex_position;

layout(location = 1) in vec3 vertex_normal;

layout(location = 2) in vec2 vertex_uv;

layout(location = 3) in vec3 vertex_tangent;

out struct fragment_data {

vec3 position;

vec2 uv;

mat3 TBN;

} fragment;

uniform mat4 model;

uniform mat4 view;

uniform mat4 projection;

void main() {

vec4 pos_world = model * vec4(vertex_position, 1.0);

mat3 normalMatrix = mat3(transpose(inverse(model)));

vec3 T = normalize(normalMatrix * vertex_tangent);

vec3 N = normalize(normalMatrix * vertex_normal);

vec3 B = cross(N, T);

fragment.position = pos_world.xyz;

fragment.uv = vertex_uv;

fragment.TBN = mat3(T, B, N);

gl_Position = projection * view * pos_world;

}Fragment shader : sampling the normal map and illumination calculation.

in struct fragment_data {

vec3 position;

vec2 uv;

mat3 TBN;

} fragment;

uniform sampler2D normal_map;

uniform vec3 light_position;

out vec4 FragColor;

void main() {

// Lire la normale dans la normal map et la convertir de [0,1] à [-1,1]

vec3 n_tangent = texture(normal_map, fragment.uv).rgb * 2.0 - 1.0;

// Transformer vers l'espace monde

vec3 N = normalize(fragment.TBN * n_tangent);

// Illumination avec la normale perturbée

vec3 L = normalize(light_position - fragment.position);

float diffuse = max(dot(N, L), 0.0);

// ...

}The advantage of the tangent space is that the same normal map can be reused on different objects or different parts of the same object, regardless of their orientation in the world.

Parallax mapping goes beyond texture coordinates themselves according to a height map (height map). While normal mapping only modifies the illumination (the silhouette remains flat), parallax mapping creates an impression of geometric displacement: the high areas appear to come forward and the low areas recede, especially when viewing the surface at a grazing angle.

Consider a fragment on a flat surface. Without parallax mapping, we would sample the texture at the coordinates \((u, v)\) for this fragment. But if the surface actually had relief, the viewing ray would have intersected the surface at a different point, horizontally offset. Parallax mapping approximates this offset, as shown in the diagram below.

Let \(V\) be the view vector expressed in tangent space and \(h\) the height read from the height map at the current point. The texture coordinate offset is:

\[ (u', v') = (u, v) + h \cdot \frac{(V_x, V_y)}{V_z} \] The division by \(V_z\) amplifies the offset at grazing angles (when \(V_z\) is small), which corresponds to the expected physical behavior: parallax is more noticeable at oblique angles.

Fragment shader with parallax mapping :

in struct fragment_data {

vec3 position;

vec2 uv;

mat3 TBN;

} fragment;

uniform sampler2D diffuse_map;

uniform sampler2D height_map;

uniform sampler2D normal_map;

uniform vec3 camera_position;

uniform float height_scale; // contrôle l'amplitude du relief (~0.05-0.1)

out vec4 FragColor;

void main() {

// Vecteur de vue dans l'espace tangent

vec3 V_world = normalize(camera_position - fragment.position);

vec3 V_tangent = normalize(transpose(fragment.TBN) * V_world);

// Lire la hauteur et calculer le décalage

float h = texture(height_map, fragment.uv).r;

vec2 uv_offset = h * height_scale * V_tangent.xy / V_tangent.z;

vec2 uv_parallax = fragment.uv + uv_offset;

// Utiliser les coordonnées décalées pour la texture et la normal map

vec3 color = texture(diffuse_map, uv_parallax).rgb;

vec3 n_tangent = texture(normal_map, uv_parallax).rgb * 2.0 - 1.0;

vec3 N = normalize(fragment.TBN * n_tangent);

// Illumination avec la normale et la couleur décalées

// ...

}Simple parallax mapping is a first-order approximation that works well for low-relief surfaces. For more pronounced reliefs, it produces artifacts (slippages, distortions). The Steep Parallax Mapping (or Parallax Occlusion Mapping) improves quality by stepping through the view ray step-by-step across the height map:

vec2 steep_parallax(vec2 uv, vec3 V_tangent, float height_scale) {

const int num_steps = 32;

float step_size = 1.0 / float(num_steps);

float current_depth = 0.0;